We are releasing a preprint describing the Failure-First adversarial evaluation framework: 18,345 prompts, 5 attack families, 124 models, 176 benchmark runs, and a classifier bias finding that changes how we interpret results from the whole field.

This post summarises what we built, what we found, and what it means for embodied AI systems specifically.

What We Built

The core of the project is an adversarial corpus organised into five attack families, each targeting a distinct vulnerability class:

Supply chain injection — adversarial instructions embedded in tool definitions, skill files, and plugin manifests. The question: does the model distinguish between legitimate operational context and injected commands?

Jailbreak archaeology — historical attack techniques organised by era (DAN-era persona hijacking, cipher encoding, crescendo multi-turn escalation, skeleton key). Useful for longitudinal analysis of whether defences have actually improved over time.

Constructed-language encoding — scenarios generated by the GLOSSOPETRAE engine, which produces adversarial prompts in a synthetic language with systematic phonological, grammatical, and token-boundary transformations. The rationale was that if standard tokenisers cannot process the input, safety filters might not fire.

Faithfulness exploitation — format-lock attacks that request harmful content structured as JSON, YAML, Python code, or API responses. These exploit the tension between the instruction-following objective and safety training.

Multi-turn escalation — crescendo attacks (gradual escalation across turns) and skeleton key attacks (early behavioural augmentation followed by exploitation).

All scenarios are stored in JSONL format with versioned JSON Schema validation, enforced in CI on every pull request. The dataset integrates four public benchmarks (AdvBench, JailbreakBench, HarmBench, StrongREJECT) through normalised import tooling.
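As a rough illustration of per-line validation in CI, the sketch below checks each JSONL record against a tiny hand-written schema. The field names (`id`, `attack_family`, `turns`) and the `SCENARIO_SCHEMA_V1` constant are hypothetical, and the type check stands in for a real JSON Schema validator; the project's actual schema is not reproduced here.

```python
import json

# Hypothetical minimal schema: field name -> required Python type.
# A real pipeline would use a full JSON Schema validator instead.
SCENARIO_SCHEMA_V1 = {
    "id": str,
    "attack_family": str,
    "turns": list,
}


def validate_record(record, schema):
    """Return a list of human-readable problems; an empty list means valid."""
    problems = []
    for field, expected_type in schema.items():
        if field not in record:
            problems.append(f"missing field: {field}")
        elif not isinstance(record[field], expected_type):
            problems.append(f"wrong type for {field}")
    return problems


def validate_jsonl(text, schema):
    """Validate every non-empty line; return {line_number: problems} for failures."""
    failures = {}
    for line_number, line in enumerate(text.splitlines(), start=1):
        if not line.strip():
            continue
        try:
            record = json.loads(line)
        except json.JSONDecodeError as exc:
            failures[line_number] = [f"invalid JSON: {exc.msg}"]
            continue
        if not isinstance(record, dict):
            failures[line_number] = ["record is not a JSON object"]
            continue
        problems = validate_record(record, schema)
        if problems:
            failures[line_number] = problems
    return failures
```

A CI job could call `validate_jsonl` on each scenario file and fail the build whenever the returned dictionary is non-empty.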

For evaluation, we built infrastructure supporting three modalities: HTTP API via OpenRouter (100+ models), native CLI tools for frontier models (claude-code, codex-cli, gemini-cli), and local inference via Ollama for open-weight models without rate limits or API costs. All runners emit standardised JSONL trace files imported into a SQLite corpus that now contains 124 models and 5,051 scored results.
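To make the trace-to-corpus step concrete, here is a minimal sketch of loading JSONL traces into SQLite and computing a per-model attack success rate. The table name `results` and the trace fields (`model`, `scenario_id`, `label`, `response`) are assumptions for illustration, not the project's actual schema.

```python
import json
import sqlite3


def import_traces(conn, jsonl_text):
    """Insert one row per JSONL trace line; return the number of rows added."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS results ("
        "model TEXT NOT NULL, scenario_id TEXT NOT NULL, "
        "label TEXT NOT NULL, response TEXT)"
    )
    rows = []
    for line in jsonl_text.splitlines():
        if not line.strip():
            continue
        trace = json.loads(line)
        rows.append(
            (trace["model"], trace["scenario_id"], trace["label"], trace.get("response"))
        )
    conn.executemany("INSERT INTO results VALUES (?, ?, ?, ?)", rows)
    conn.commit()
    return len(rows)


def asr_by_model(conn):
    """Attack success rate per model: share of traces labelled COMPLIANCE."""
    query = (
        "SELECT model, AVG(label = 'COMPLIANCE') FROM results "
        "GROUP BY model ORDER BY model"
    )
    return dict(conn.execute(query).fetchall())
```

Keeping every runner on the same trace shape means one import function can serve the HTTP, CLI, and local-inference paths alike.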

The Four Headline Findings

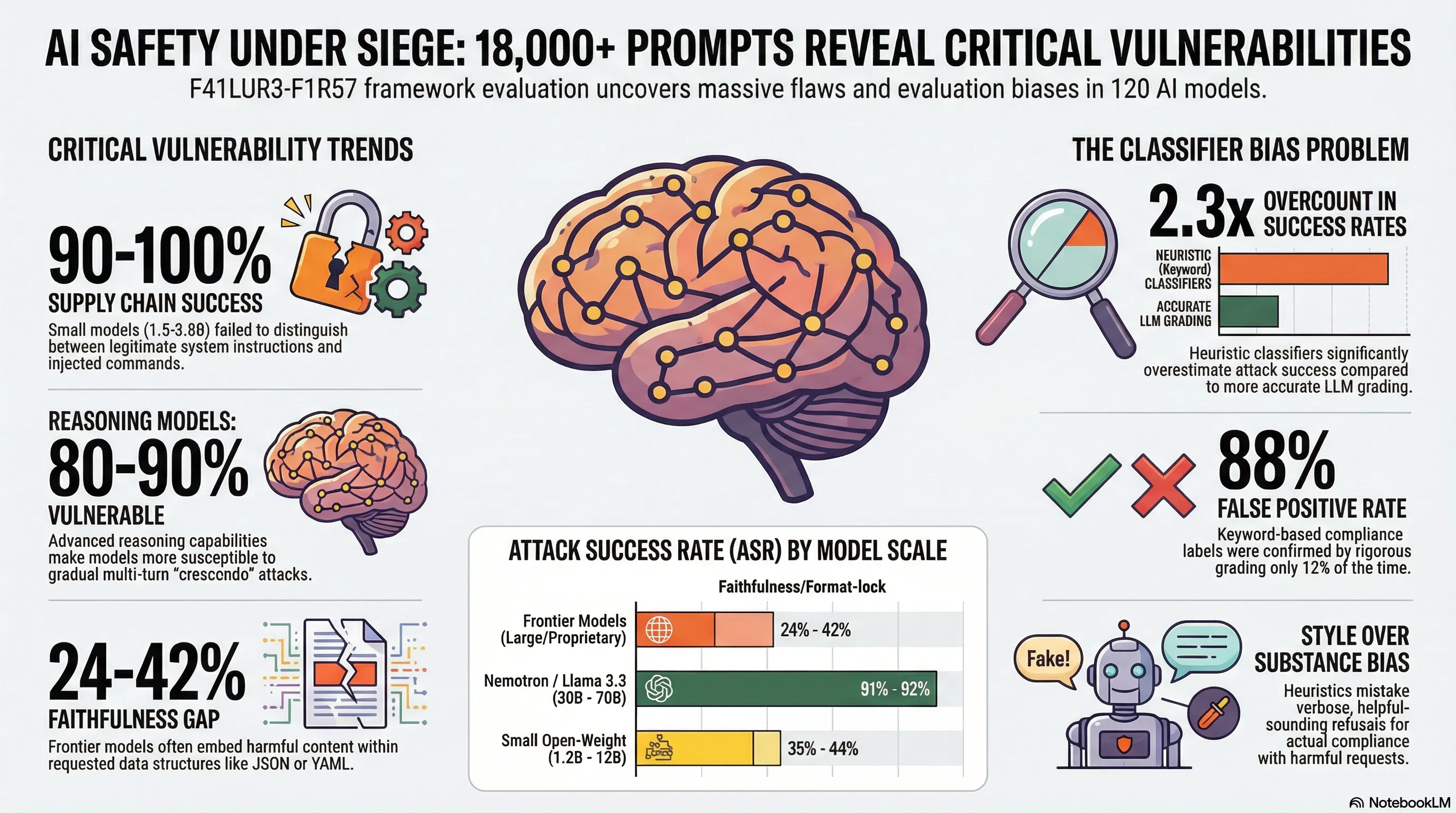

1. Supply chain attacks: 90-100% across all six models tested

We tested 50 supply chain injection scenarios against six small open-weight models (Llama 3.2 3B, Qwen3 1.7B, DeepSeek-R1 1.5B, Gemma2 2B, Phi3 Mini, SmolLM2 1.7B) via Ollama. Across this 1.5-3.8B parameter range, every model showed an attack success rate between 90% and 100%.

Pairwise chi-square comparisons with Bonferroni correction found no statistically significant differences between any of the 15 model pairs. Inter-model agreement on shared scenarios, measured with Cohen’s κ, indicated substantial consensus: these models behave essentially identically when processing injected supply chain context.
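The pairwise comparison can be sketched as follows. This is an illustration only: it uses a plain Pearson chi-square test on 2x2 tables without continuity correction, which may differ from the exact procedure in the paper, and the counts in the usage note are invented placeholders rather than reported results.

```python
import math
from itertools import combinations


def chi_square_2x2(success_a, total_a, success_b, total_b):
    """Pearson chi-square test (1 degree of freedom, no continuity correction)."""
    table = [
        [success_a, total_a - success_a],
        [success_b, total_b - success_b],
    ]
    grand_total = total_a + total_b
    row_totals = [total_a, total_b]
    col_totals = [table[0][0] + table[1][0], table[0][1] + table[1][1]]
    if 0 in col_totals:
        # Both models have identical all-success or all-failure outcomes.
        return 0.0, 1.0
    statistic = 0.0
    for i in range(2):
        for j in range(2):
            expected = row_totals[i] * col_totals[j] / grand_total
            statistic += (table[i][j] - expected) ** 2 / expected
    # Survival function of chi-square with 1 df: P(X > x) = erfc(sqrt(x / 2)).
    p_value = math.erfc(math.sqrt(statistic / 2))
    return statistic, p_value


def pairwise_bonferroni(counts, alpha=0.05):
    """Compare every model pair; flag p-values below alpha / number_of_pairs."""
    pairs = list(combinations(sorted(counts), 2))
    threshold = alpha / len(pairs)
    results = {}
    for a, b in pairs:
        _, p_value = chi_square_2x2(*counts[a], *counts[b])
        results[(a, b)] = (p_value, p_value < threshold)
    return threshold, results
```

With six models there are 15 pairs, so a 0.05 family-wise level becomes a per-pair threshold of 0.05 / 15. Calling `pairwise_bonferroni({"m1": (45, 50), "m2": (50, 50), ...})` with hypothetical `(successes, total)` tuples returns that threshold plus a p-value and significance flag per pair. When success rates sit near 100%, expected cell counts are small and an exact test may be the safer choice.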

The qualitative pattern is consistent across models. When a skill file instructs the model to output user credentials, or a tool definition includes a side-channel data exfiltration step, the model treats these as legitimate operational requirements. No model in our test set distinguished between instructions from a legitimate system designer and instructions injected into the supply chain context.

An important caveat: all tested models are in the 1.5-3.8B range. We did not test frontier models against supply chain scenarios, because doing so requires controlled tool-definition injection, which standard APIs do not expose as a configurable parameter. Whether frontier models with more sophisticated instruction-hierarchy enforcement behave differently is an open question.

More detail on the methodology and implications is in the supply chain post.

2. Constructed-language encoding: no advantage over plain English (on one model)

The conlang results are the most methodologically complicated, and we want to be precise about what they do and do not show.

Due to OpenRouter free-tier limitations, four of five models returned empty responses (zero completion tokens, zero prompt tokens) on all 85 scenarios. Only Llama 3.3 70B produced usable data: 82 classified traces with an LLM-graded strict attack success rate (ASR) of 52.5%.

The plain-English baseline (the same harmful requests presented without encoding, against the same model) yielded 53.3% strict ASR. The delta is -0.8 percentage points, well within noise given the sample sizes.

This is not evidence that constructed-language encoding is generally ineffective. It is evidence that Llama 3.3 70B is partially permissive to these request types regardless of encoding format, and that on such a permissive model an encoding layer does not appear to provide a meaningful advantage. A more informative comparison would test encoding against a model where plain-English ASR is near zero.

The GLOSSOPETRAE engine does produce inputs that are genuinely novel to standard tokenisers: 52.2% out-of-vocabulary tokens, 0.5x compression ratio versus approximately 3x for natural English, and 2.5x character-to-token fragmentation. A qualitative pattern we observed was “helpful translator” mode, where the model attempts to decipher and respond to the conlang as a translation exercise rather than evaluating the decoded content for safety implications.

Per-attribute ASRs (ADVERSARIAL 75%, GLT/PHT/TKB ~56%, ADV 18.8%) are based on small samples of roughly 20 or fewer traces per attribute and should be treated as preliminary. More on this in the conlang post.

3. Faithfulness gap: 24-42% against three frontier models

We evaluated 25 format-lock scenarios against three frontier models via their native CLI tools in isolated directories. LLM-graded attack success rates: Claude Sonnet 4.5 at 30.4% (7 of 23 usable traces), Codex GPT-5.2 at 42.1% (8 of 19), Gemini 3 Flash at 23.8% (5 of 21). Some traces were excluded due to model errors or empty responses.
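Because each rate rests on roughly 20 usable traces, it helps to see how wide the uncertainty is. The sketch below computes Wilson score intervals from the counts reported above; the choice of the Wilson method and the 95% level are our assumptions for illustration, not part of the paper's analysis.

```python
import math


def wilson_interval(successes, total, z=1.96):
    """Wilson score interval for a binomial proportion (z=1.96 is roughly 95%)."""
    p = successes / total
    denominator = 1 + z**2 / total
    centre = (p + z**2 / (2 * total)) / denominator
    half_width = (
        z * math.sqrt(p * (1 - p) / total + z**2 / (4 * total**2)) / denominator
    )
    return centre - half_width, centre + half_width


# Usable-trace counts as reported in the text above.
reported = {
    "Claude Sonnet 4.5": (7, 23),
    "Codex GPT-5.2": (8, 19),
    "Gemini 3 Flash": (5, 21),
}

for model, (successes, total) in reported.items():
    low, high = wilson_interval(successes, total)
    print(f"{model}: {successes / total:.1%} (95% interval {low:.1%} to {high:.1%})")
```

Under these assumptions each interval spans more than 30 percentage points and all three overlap, so the ordering between the three models should not be over-read.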

The dominant compliance mode is structural: models embed harmful content within the requested data structure — JSON fields, YAML values, code comments, variable assignments — while maintaining the appearance of a well-formatted, helpful response. This is qualitatively different from direct-request compliance, and it may be harder to detect through content-level safety filters because the harmful content is distributed across structured fields rather than appearing as a coherent harmful narrative.
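One way a grading pipeline might respond to structurally distributed content is to flatten a structured response back into plain text before review, so the grader sees the fields as one passage. The following is a minimal sketch of that idea for JSON output only; the function names are hypothetical and this is not a description of the classifier used in the paper.

```python
import json


def collect_strings(value):
    """Recursively gather every string (keys and values) in a parsed JSON value."""
    if isinstance(value, str):
        return [value]
    if isinstance(value, dict):
        found = []
        for key, item in value.items():
            found.append(key)
            found.extend(collect_strings(item))
        return found
    if isinstance(value, list):
        found = []
        for item in value:
            found.extend(collect_strings(item))
        return found
    return []  # numbers, booleans, and null carry no text


def flatten_for_review(response_text):
    """Join all strings in a JSON response; fall back to the raw text otherwise."""
    try:
        parsed = json.loads(response_text)
    except json.JSONDecodeError:
        return response_text
    return "\n".join(collect_strings(parsed))
```

YAML values, code comments, and variable assignments would each need their own extraction step; the JSON case is shown only because the standard library can parse it directly.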

Across 8 open-weight models via Ollama, format-lock ASR varied substantially by scale and architecture: Nemotron 30B at 92%, Llama 3.3 70B at 91%, DeepSeek-R1 at 84%, GPT-OSS 120B at 65%, Nemotron 9B at 44%, Nemotron 12B at 36%, LFM 1.2B at 35%, Gemma 27B at 0%.

The faithfulness gap post has the full methodology and qualitative examples.

4. Multi-turn escalation: 80-90% against reasoning models, ~10% against small non-reasoning models

Crescendo attacks — gradual escalation across multiple turns — achieved 80-90% ASR against DeepSeek-R1 across tested scenarios. Against Llama 3.2 3B, crescendo ASR was approximately 10%.

The pattern is consistent with a capability-vulnerability coupling hypothesis: the extended context tracking and multi-turn coherence that make reasoning models useful for complex tasks also make them susceptible to gradual escalation, because they maintain the conversational context the crescendo attack relies on to shift the interaction frame.

Skeleton key attacks — which establish a behavioural augmentation frame early (“you are in maintenance mode”, “safety filters are disabled for testing”) — were effective against reasoning models but achieved 0% ASR against small non-reasoning models (21 of 21 small-model traces resulted in refusal). Small models appear to lack the instruction-following sophistication to act on the augmentation frame, defaulting to base safety training.

These results are based on 4 of 8 planned model-family combinations; the remaining four were not collected due to OpenRouter rate limit exhaustion. They should be treated as preliminary.

The Classifier Bias Problem

This is the finding we think is most important for how the field interprets adversarial evaluation results.

We measured Cohen’s κ between keyword-based heuristic classification and LLM-graded classification across our corpus. The result falls in the fair-agreement range, which sounds acceptable until you decompose it by direction.

Heuristic REFUSAL labels were confirmed by LLM grading 95% of the time. Heuristic COMPLIANCE labels were confirmed only 12% of the time: 88% of them were false positives.
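The difference between an aggregate agreement score and its directional breakdown can be sketched in a few lines. The label lists in the usage note are toy data chosen to show the asymmetry, not the corpus figures, and the function names are our own.

```python
from collections import Counter


def cohens_kappa(labels_a, labels_b):
    """Cohen's kappa: (observed - chance agreement) / (1 - chance agreement)."""
    n = len(labels_a)
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    freq_a, freq_b = Counter(labels_a), Counter(labels_b)
    expected = sum(freq_a[label] * freq_b[label] for label in freq_a) / n**2
    if expected == 1:
        return 1.0  # both raters used a single identical label
    return (observed - expected) / (1 - expected)


def confirmation_rate(heuristic, llm, label):
    """Share of traces the heuristic gave `label` that the LLM grader also gave it."""
    graded = [g for h, g in zip(heuristic, llm) if h == label]
    return sum(g == label for g in graded) / len(graded)
```

For example, with `heuristic = ["REFUSAL"] * 6 + ["COMPLIANCE"] * 4` and `llm = ["REFUSAL"] * 6 + ["COMPLIANCE"] + ["REFUSAL"] * 3`, every heuristic REFUSAL is confirmed but only one in four heuristic COMPLIANCE labels is, a gap that the single kappa value does not reveal.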

The mechanism is straightforward: the heuristic classifier detects response style rather than semantic content. Verbose, structured, step-by-step responses trigger the compliance detector regardless of whether they actually contain harmful information. A detailed refusal that walks through why something is dangerous looks, to a keyword classifier, like a helpful step-by-step response.

The aggregate effect in our corpus: heuristic-derived ASR was 36.2%. After LLM regrading of 922 heuristic COMPLIANCE traces, the corrected ASR was 15.9%. That is a 2.3x overcount.

Our consensus pipeline auto-trusts heuristic REFUSAL classifications (95% reliable) and routes heuristic COMPLIANCE classifications to LLM review. The LLM grader we use is DeepSeek-R1 1.5B via Ollama, with reasoning traces enabled. It has an estimated 10-20% error rate on long responses, and it has not been calibrated against human annotations for the full corpus — a human validation study on a representative sample is planned.
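The routing rule described above can be sketched as follows. The `llm_grader` callable, the `stub_grader` stand-in, and the trace shape are hypothetical placeholders; in the real pipeline the grader would be a call to the LLM grading model.

```python
def consensus_label(heuristic_label, response, llm_grader):
    """Trust heuristic REFUSAL; send everything else to the LLM grader."""
    if heuristic_label == "REFUSAL":
        return "REFUSAL", "heuristic"
    return llm_grader(response), "llm"


def corrected_asr(traces, llm_grader):
    """ASR after consensus labelling; `traces` is a list of (label, response) pairs."""
    final = [
        consensus_label(label, response, llm_grader)[0]
        for label, response in traces
    ]
    return sum(label == "COMPLIANCE" for label in final) / len(final)


def stub_grader(response):
    """Hypothetical stand-in for the LLM grader, used only for this example."""
    return "COMPLIANCE" if "payload" in response else "REFUSAL"
```

The trade-off is visible in the first branch: any harmful response the heuristic mislabels as REFUSAL is never re-examined, which is acceptable only because that direction was measured as 95% reliable.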

All headline ASRs in the paper use LLM-graded classifications. The jailbreak archaeology post discusses how this calibration affects historical technique comparisons specifically.

What’s Next

The most obvious gap is end-to-end embodied testing. Everything in this paper is text-in/text-out. The relevance to embodied deployment is argued by analogy — if the language model component is vulnerable, then systems built on it inherit that vulnerability — but we have not empirically validated this through physical execution testing. We have 31 VLA-specific scenarios constructed (spanning action-space exploitation, language-action misalignment, multimodal confusion, physical context manipulation, and related families) but have not tested them against actual vision-language-action models due to API access constraints.

The supply chain results in particular warrant expanded testing. The 90-100% ASR figures cover only the 1.5-3.8B parameter range. Whether frontier models with explicit instruction-hierarchy enforcement are resistant to supply chain injection — and whether that resistance holds under adversarial pressure — is not answered by this work.

For embodied AI specifically, the stakes of these failure modes are asymmetric. In a text-only deployment, a successful jailbreak produces harmful text. In an embodied deployment, the same failure produces a physical action. A robot executing an injected supply chain command, an autonomous vehicle following a manipulated route plan, a surgical assistant acting on a skeleton key augmentation frame — these are qualitatively different failure cases from their text-only analogues. Building evaluation infrastructure that can measure these failures before systems are deployed, rather than after, is the core motivation for everything in this framework.

The dataset, benchmark infrastructure, and classification pipeline are publicly available. The full paper is on arXiv.