Tacmap: Bridging the Tactile Sim-to-Real Gap via Geometry-Consistent Penetration Depth Map

Tacmap introduces a geometry-consistent penetration depth map framework that bridges the tactile sim-to-real gap by unifying simulation and real-world tactile sensing through a shared volumetric deform map representation.

Tacmap: Bridging the Tactile Sim-to-Real Gap via Geometry-Consistent Penetration Depth Map

Introduction: The Tactile Bottleneck

For robots to move beyond basic pick-and-place toward true human-like dexterity, tactile perception is non-negotiable. Vision-Based Tactile Sensors (VBTS), such as GelSight and DIGIT, have emerged as the standard for high-resolution feedback, utilizing internal cameras to monitor the deformation of an elastomer membrane. This provides a rich stream of geometric and force data that far surpasses traditional taxel-based sensors.

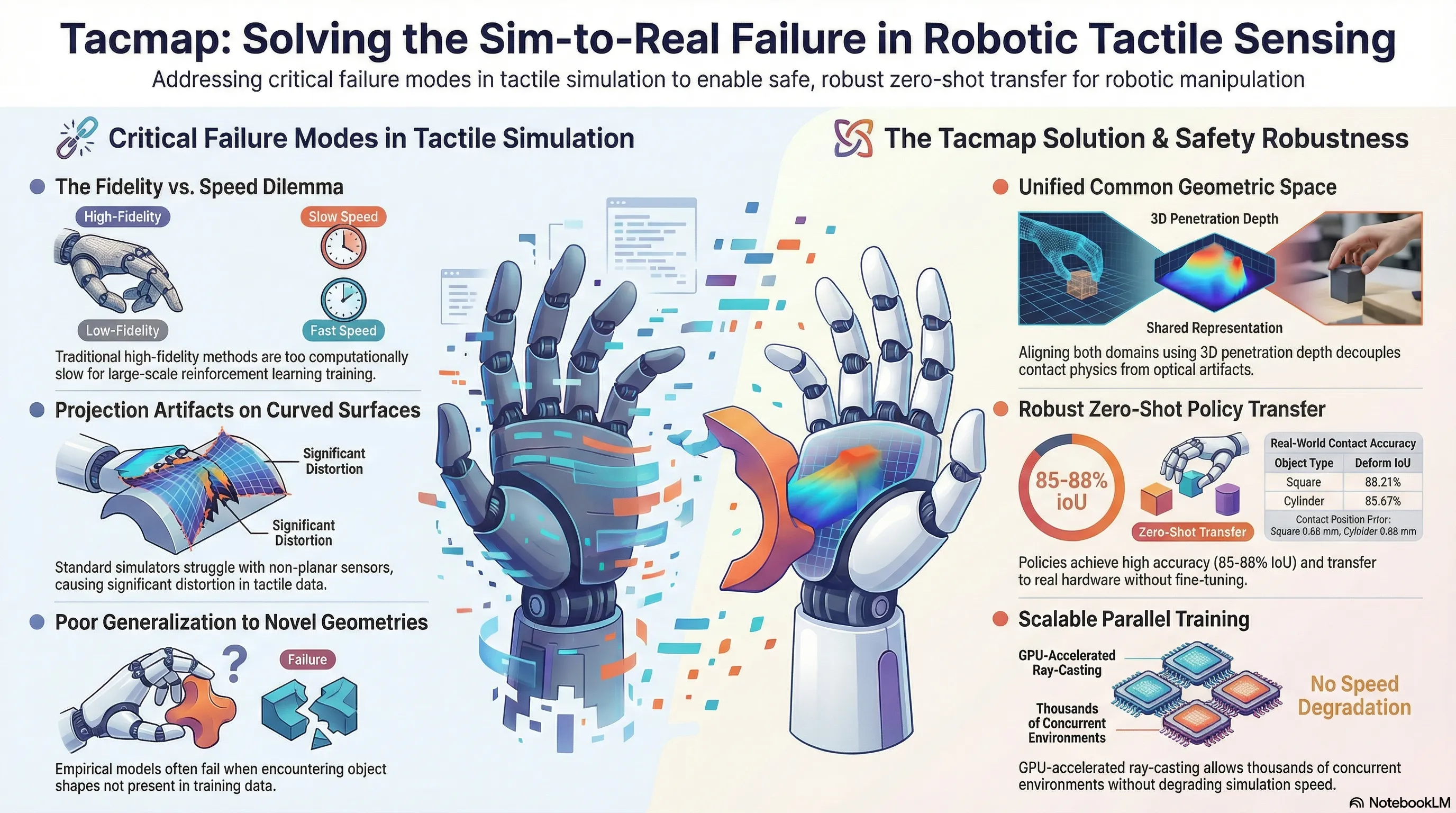

However, a fundamental “Tactile Sim-to-Real Gap” persists. While reinforcement learning (RL) requires millions of samples—making simulation an absolute necessity—policies trained on synthetic tactile data often fail catastrophically in the physical world. This failure is rarely due to control logic; it is a systemic failure of representation. Simulators struggle to reconcile the non-linear optical properties of elastomer membranes (light scattering, internal reflections) with the underlying physics of contact, leading to policies that are “blinded” by the domain shift when deployed. Tacmap bridges this gap by introducing a “shared geometric language”—unifying simulation and reality through a domain-invariant representation of volumetric penetration depth.

The Failure of Current Simulations: A Persistence of Error

Existing tactile simulation methodologies present a trilemma between physical authenticity, computational throughput, and generalization. For AI safety researchers, this gap creates a dangerous environment for reward hacking, where agents learn to exploit visual artifacts of the simulation—such as specific lighting or shading gradients—rather than internalizing the true physics of contact.

The research identifies three critical failure modes in current simulation categories:

- Empirical Methods (e.g., Taxim): These use data-driven models to mimic sensor appearance. While photorealistic, they lack structural congruence when encountering novel object geometries, leading to brittle policies that fail on out-of-distribution (OOD) shapes.

- Analytical Methods (e.g., TACTO): Relying on standard rasterization, these methods provide high speed but utilize simplified depth-buffer mapping. They fail to capture the volume-preserving characteristics of the elastomer, creating a massive reality gap in contact-rich tasks.

- Physics-based Methods (e.g., FEM): These model soft-body dynamics with high fidelity. However, they are computationally prohibitive for large-scale RL, making them unsuitable for the vectorized pipelines required by modern agents.

The Core Innovation: Volumetric Penetration Depth

The key insight of Tacmap is the use of volumetric penetration depth as a universal proxy for contact physics. By abstracting tactile feedback into a “Common Geometric Space,” Tacmap decouples the underlying contact mechanics from sensor-specific optical artifacts.

To achieve this, Tacmap utilizes a generalized normal-projection space. Unlike previous simulators designed for flat surfaces, Tacmap is geometry-agnostic. By casting rays along the surface normals of the elastomer, the framework eliminates the projection distortions and artifacts that typically plague curved sensor simulations. This allows for seamless support of anthropomorphic, curved fingertips, which are essential for complex manipulation.

How Tacmap Works: Structural Congruence Across Domains

Tacmap ensures consistency by applying identical geometric projection logic to both virtual and physical data streams.

In Simulation: The Ray-Casting Pipeline

The system defines the sensor geometry using two boundaries: the undeformed sensor surface () and a virtual sensing surface () at a fixed exterior offset. GPU-accelerated rays are cast from toward the sensor interior along the surface normal. The deformation value is calculated by determining the object’s occupancy between the surfaces: Where is the ray origin, is the undeformed surface coordinate, and is the first intersection point with the object mesh.

In the Real World: Two Pathways for Perception

To align physical hardware—specifically the SharpaWave hand and its DTC tactile sensors—with the simulation, two distinct ResNet-based models are employed:

- Deform Map Translation: A ResNet-based encoder-decoder architecture is trained to invert the raw, optically complex tactile images into unified geometric “deform maps.”

- Net Force Estimation: A separate ResNet-based regression network maps raw images to net force readings (F), calibrated against a high-precision force sensor on an automated hardware-in-the-loop rig.

Performance Metrics: Quantifying the Geometric Alignment

Quantitative evaluation across diverse contact scenarios proves that Tacmap’s “Common Geometric Space” effectively minimizes the sim-to-real gap.

Tacmap Performance vs. Real-World Ground Truth

| Metric | Object: Square | Object: Cylinder |

|---|---|---|

| Deform IoU (Manifold Alignment) | 88.21% | 85.67% |

| Deform Depth Error (Relative %) | 18.53% | 14.71% |

| Median Force Error | 0.28 N | 0.61 N |

| Contact Position Error | 0.66 mm | 0.96 mm |

Regarding scalability, Tacmap maintains the vectorized efficiency required for modern RL. While total simulation speed is high, GPU memory allocation exhibits a steep climb when scaling the sensor array; experiments show the framework supports 1024 parallel environments with 5 active Tacmap sensors per environment before reaching the limits of consumer-grade VRAM (approx. 38GB).

The Proof: Zero-Shot In-Hand Rotation

The framework’s robustness was validated through an In-Hand Rotation task—a high-dimensional challenge requiring continuous re-orientation of a spherical object. The policy was trained exclusively in simulation using Proximal Policy Optimization (PPO), where it internalized the geometric gradients of the contact manifolds.

In a Zero-Shot Transfer, the policy was deployed on the physical SharpaWave hand without fine-tuning. The agent successfully interpreted the real-time Tacmap stream, perceiving subtle contact transitions and modulating force to prevent slips. This success confirms that the geometric abstractions learned in simulation are structurally congruent with translated real-world tactile signals.

Implications for AI Safety and Red-Teaming

For safety researchers, Tacmap is a critical tool for mitigating “domain shift” failure modes. Tactile-guided manipulation is notoriously prone to adversarial geometries—surface features that would break a standard vision-based policy.

- Robustness Against OOD Geometries: By grounding the agent in consistent geometric depth rather than raw pixels, safety researchers can “red-team” policies with adversarial object shapes that would typically trigger systematic failures in visually-reliant models.

- Mitigating Brittle Policies: Tacmap prevents the agent from developing dependencies on simulation-specific lighting, ensuring the policy is anchored in the invariant physics of volumetric interpenetration.

Discussion of Limitations & Co-Design Opportunities: The current iteration focuses on normal penetration depth and does not yet explicitly model tangential force distribution or shear strain. This represents a significant co-design opportunity for future work: iterating on hardware-sensor configurations and the Tacmap framework simultaneously to incorporate multi-dimensional force fields. Furthermore, as mesh complexity increases, future research into advanced acceleration structures will be required to maintain massive parallel throughput.

Key Takeaways

- Domain-Invariant Bridge: Volumetric penetration depth serves as a unified geometric representation that circumvents the need for complex optical modeling.

- Vectorized Efficiency: GPU-accelerated ray-casting along surface normals allows for high-fidelity tactile rendering at the speeds required for massive parallel RL.

- Proven Robustness: High IoU (up to 88.21%) and low force error facilitate zero-shot transfer for complex, contact-rich manipulation tasks, moving us closer to reliable, tactile-aware autonomous agents.

Read the full paper on arXiv · PDF