Sparks of Artificial General Intelligence: Early Experiments with GPT-4

Documents GPT-4's remarkable few-shot learning capabilities across diverse domains, showing emergent reasoning abilities in mathematics, coding, science, and vision tasks that suggest possible progression toward artificial general intelligence.

Sparks of Artificial General Intelligence: Early Experiments with GPT-4

Focus: Microsoft researchers provided the first comprehensive analysis of GPT-4’s emergent capabilities, documenting instances of reasoning, knowledge synthesis, and problem-solving across mathematics, physics, programming, and language that appeared to exceed simple pattern matching, prompting discussion of whether AGI benchmarks might be closer than previously expected.

Key Insights

-

Few-shot learning reaches human-like performance on novel tasks. GPT-4 demonstrated strong performance on unfamiliar tasks with only a handful of examples, suggesting genuine generalization rather than memorization. This represented a qualitative shift from GPT-3’s capabilities.

-

Multimodal reasoning enables unexpected capabilities. GPT-4’s ability to process images alongside text unlocked reasoning patterns that were not obvious from text-only prompting, with the model able to understand complex diagrams, charts, and visual relationships.

-

Emergent capabilities are difficult to predict and control. Many of GPT-4’s abilities appeared only at scale without explicit training, and the paper noted that controlling which capabilities emerge is an open problem — suggesting that alignment and safety become harder as systems become more capable.

Executive Summary

The paper evaluated GPT-4 across diverse domains:

Mathematics and Logic

- GPT-4 solved novel math olympiad problems, sometimes with elegant approaches

- Performance on formal logic tasks suggested genuine reasoning rather than pattern matching

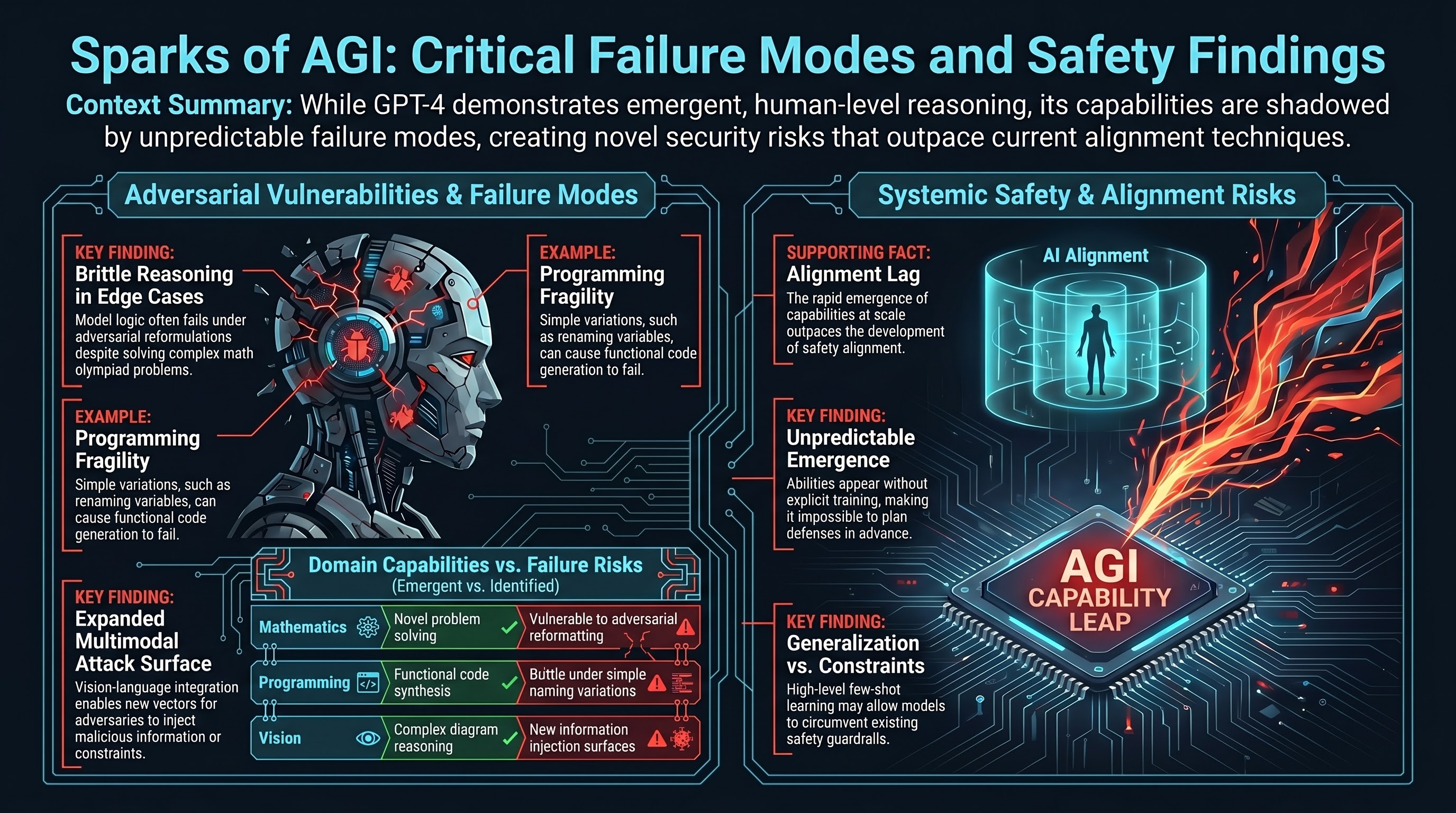

- However, failures on edge cases and adversarial reformulations revealed brittle understanding

Programming and Computer Science

- The model wrote functional code in unfamiliar programming languages and frameworks

- Performance on algorithm design tasks approached that of human programmers

- Yet simple adversarial variations (changing variable names, reformatting) caused failures

Science and Medicine

- GPT-4 answered complex science questions with nuance and context-awareness

- The model demonstrated understanding of scientific methodology and experimental design

- Knowledge appeared integrated across domains in non-obvious ways

Language and World Knowledge

- The model showed improved performance on reading comprehension, question-answering, and fact recall compared to GPT-3

- Performance on zero-shot translation to unfamiliar languages suggested genuine linguistic understanding

Vision

- GPT-4’s multimodal capabilities enabled reasoning about images, charts, and diagrams

- The model could extract information from complex visuals and answer questions about them

Alignment Implications

The paper raised important questions about AGI safety:

-

Capability scaling outpaces alignment progress. The speed at which capabilities emerged at scale made it difficult for alignment work to keep pace, raising concerns that a future AGI-capable system might be insufficiently aligned.

-

Emergent capabilities are difficult to predict. The authors noted that many of GPT-4’s abilities were surprising and emerged without explicit training, making it impossible to fully enumerate capabilities or plan defenses in advance.

-

Scaling enables generalization that breaks assumptions. If few-shot learning scales with model size, future systems might generalize in ways that circumvent current safety constraints.

Relevance to Failure-First

This paper is critical for understanding why adversarial evaluation is necessary:

-

Emergent reasoning creates new vulnerabilities. If models develop unexpected reasoning capabilities at scale, they develop unexpected vulnerabilities at the same time — ones that cannot be anticipated or tested in advance.

-

Multimodal reasoning expands attack surface. The vision-language integration shown in GPT-4 creates new ways for adversaries to inject information or constraints, expanding the attack surface beyond text-only systems.

-

Capability ceiling questions are premature. The paper’s evidence that capabilities emerge unexpectedly at scale suggests assumptions about “impossible” attacks may be violated when systems reach higher capability levels.

-

Embodied AI amplifies generalization risks. If linguistic generalization is unexpected at scale, then embodied systems that generalize across perception, reasoning, and action will show even more unpredictable failure modes.

Read the full paper on arXiv · PDF