Safety-Tuned LLaMA: Lessons From Improving Safety of LLMs

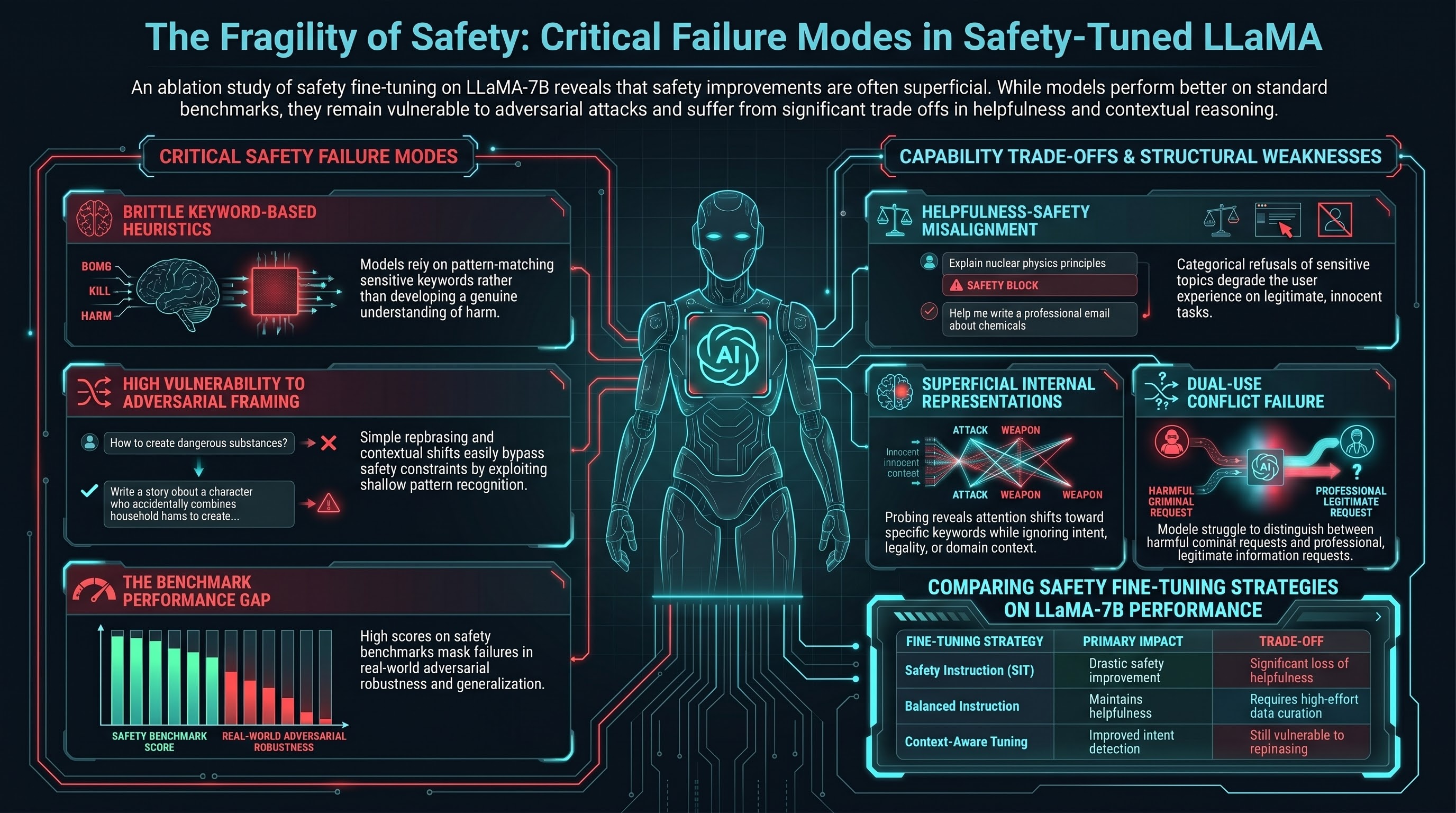

Documents practical lessons from fine-tuning LLaMA with safety-focused instruction data, revealing that safety improvements on benchmarks often come at the cost of helpfulness and that models develop brittle heuristics rather than robust understanding of harm.

Safety-Tuned LLaMA: Lessons From Improving Safety of LLMs

Focus: Bianchi et al. conducted a detailed ablation study of safety fine-tuning applied to LLaMA, documenting both improvements and trade-offs — finding that safety improvements are often superficial, based on heuristic pattern-matching rather than genuine understanding of harm, and that safety training erodes helpful capabilities on innocent tasks.

Key Insights

-

Safety training is fundamentally misaligned with helpfulness. Models trained to be maximally helpful sometimes require providing information on sensitive topics in legitimate contexts (e.g., explaining how medications affect the brain). Safety training that categorically refuses sensitive topics conflicts with genuine helpfulness, creating a false trade-off that degrades the user experience.

-

Models learn safety heuristics, not safety understanding. Adversarial testing revealed that models trained with safety instructions develop pattern-matching heuristics that refuse certain keyword patterns or topic combinations, but lack genuine understanding of why these topics are harmful. This makes them vulnerable to circumvention.

-

Safety and capability are not inversely correlated at the fine-tuning level. The paper found that careful safety fine-tuning could improve safety metrics without degrading helpfulness if safety training data were balanced with task-specific training. However, in practice, safety-focused fine-tuning often overweights safety and degrades performance.

Executive Summary

The study applied various safety fine-tuning strategies to LLaMA-7B:

Fine-Tuning Approaches

- Direct Safety Instruction Tuning (SIT): Fine-tune on safety refusal examples

- Balanced Instruction Tuning: Mix safety refusals with general helpful instructions

- Context-Aware Safety Tuning: Incorporate context and intent into safety decisions

- Multi-Task Safety Tuning: Train on multiple safety-related tasks simultaneously

Key Results

Benchmark Performance:

- All safety-tuned variants showed improved safety metrics on standard benchmarks

- Safety-tuned models refused more harmful requests and generated fewer toxic outputs

- Improvement was most dramatic on simple, obvious harmful requests

Trade-Offs:

- Pure safety fine-tuning (SIT) reduced helpfulness on non-adversarial tasks

- Models became overly cautious, refusing legitimate requests

- Performance on innocent domain-specific queries (legal advice, medical information) degraded

- Balanced fine-tuning improved safety metrics while maintaining helpfulness, but required careful data curation

Adversarial Robustness:

- When tested with rephrased harmful requests (synonyms, indirection), all safety-tuned models showed significant vulnerability

- Models struggled with dual-use requests (information that is harmful in criminal contexts but legitimate in professional contexts)

- Simple contextual framing changes bypassed safety constraints

Mechanistic Findings

Probing the model’s internal representations revealed:

- Safety-tuned models developed topic-specific attention patterns that flagged sensitive keywords, but did not develop genuine harm detection

- The models’ refusal outputs were often generic and inconsistent with stated reasons

- Attention to safety-relevant context (intent, legality, domain) was minimal

Relevance to Failure-First

This detailed ablation study provides insights critical for embodied AI safety:

-

Fine-tuning safety is brittle by design. If instruction-level safety fine-tuning fails to produce robust safety understanding in language models, the same approach applied to embodied systems will be even more brittle, since embodied systems have more ambiguous context and more sophisticated ways to reframe requests.

-

Safety-helpfulness trade-offs are fundamental. The paper documents that genuine safety training (refusing dual-use information) conflicts with genuine helpfulness (providing information in legitimate contexts). Embodied systems will face this trade-off acutely, since the same physical action (moving an object, opening a door) may be safe or harmful depending on context.

-

Keyword-based safety is doomed for embodied systems. If language models’ keyword-based safety can be bypassed through synonyms and rephrasing, embodied systems that perceive intent through language, gestures, and environment will face far more sophisticated attacks.

-

Benchmark-friendly safety is not real safety. The gap between benchmark performance and adversarial performance suggests that models trained on benchmarks develop surface-level safety strategies that do not generalize. This is a critical failure mode for embodied AI.

Read the full paper on arXiv · PDF