GameplayQA: A Benchmarking Framework for Decision-Dense POV-Synced Multi-Video Understanding of 3D Virtual Agents

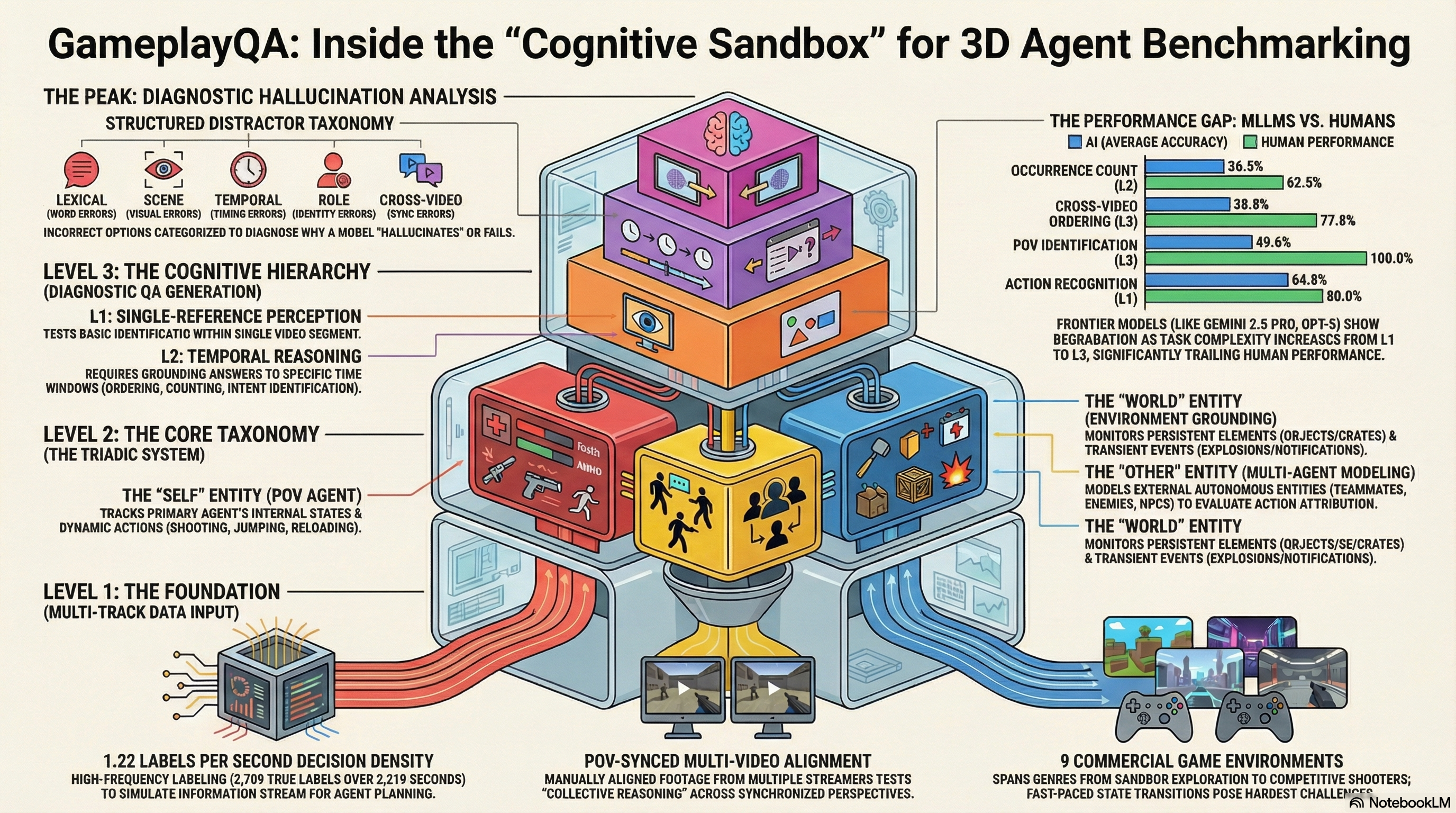

Introduces GameplayQA, a densely annotated benchmark for evaluating multimodal LLMs on first-person multi-agent perception and reasoning in 3D gameplay videos, with diagnostic QA pairs and structured failure analysis.

GameplayQA: A Benchmarking Framework for Decision-Dense POV-Synced Multi-Video Understanding of 3D Virtual Agents

1. Introduction: The Perceptual Frontier of 3D Agents

The field of Multimodal Large Language Models (MLLMs) is undergoing a paradigm shift. We are transitioning from passive observers—models that describe static scenes—to active “perceptual backbones” for autonomous agents. Whether deployed in high-fidelity virtual worlds or physical robotics, these models now serve as the primary sensory-to-cognitive interface for decision-making agents.

In these goal-directed 3D environments, reliable performance requires what we define as agentic perception. This transcends simple object recognition and necessitates three core capabilities:

- Dense State-Action Tracking: The ability to capture rapid transitions in the agent’s own internal states and intentional actions.

- Other-Agent Modeling: Reasoning about the behaviors, conditions, and underlying intentions of external autonomous entities (teammates, adversaries, or NPCs).

- Environment Grounding: Precisely tracking persistent world elements and transient, high-frequency environmental events.

Current benchmarks are failing us. They lack the diagnostic granularity to identify why an agent fails in the field. To bridge this gap, we introduce GameplayQA, a framework designed to stress-test the cognitive foundations of agency and expose the specific perceptual bottlenecks of frontier MLLMs.

2. Why Current Benchmarks Fall Short

Existing video understanding benchmarks are fundamentally inadequate for the demands of embodied AI. Our research identifies three primary deficiencies:

- Lack of Embodiment and Agency Grounding: Most datasets rely on slow-paced, passive footage. They lack the high-frequency state transitions and dense decision-making loops required to test a model’s understanding of intentional action.

- Lack of Hallucination-Diagnosability: Traditional benchmarks offer monolithic accuracy scores. They cannot distinguish if a failure stems from a temporal misinterpretation, a visual fabrication, or a role-attribution error.

- Lack of Multi-Video Understanding: Embodied perception often requires fusion from multiple perspectives (e.g., surround-view cameras in robotics). Current protocols focus almost exclusively on single-viewpoint observation.

3. GameplayQA: The High-Density “Cognitive Sandbox”

GameplayQA is an end-to-end diagnostic pipeline that utilizes 9 commercial games—including Minecraft, Counter-Strike 2, Battlefield 6, and Apex Legends—as a rigorous testing ground. This framework utilizes a metric we call Decision Density (), defined as the temporal frequency of semantic labels required for an agent’s planning and reaction loop:

In our benchmark, 2,709 true labels are annotated across 2,219.41 seconds of footage, yielding a high-frequency density of labels/second. This forces models to process information at the speed of intentional action, far exceeding the pace of passive video datasets.

To structure this information, GameplayQA utilizes the Triadic Entity System, mapping perception across three critical domains:

| Entity Category | Target Captures (Acronyms) | Relevance to Agentic Perception |

|---|---|---|

| Self | Self-Action (SA), Self-State (SS) | Tracks the POV agent’s actions and internal conditions (health, inventory). |

| Other | Other-Action (OA), Other-State (OS) | Models behaviors and statuses of external agents for multi-agent safety. |

| World | World-Object (WO), World-Event (WE) | Grounds perception in static environment items and transient dynamic events. |

4. The Three Levels of Cognitive Complexity

We stratify task difficulty into a three-level hierarchy to isolate specific reasoning failures:

- Level 1 (Single Reference): Basic Perception Tests the fundamental recognition of actions, states, objects, and events within a single video segment.

- Level 2 (Temporal Reasoning): Grounding and Intent Requires models to ground answers within specific time windows. This includes time localization and Intent Identification. Notably, intent is the most subjective and “defensible” category, serving as a unique diagnostic signal for whether a model understands the why behind an agent’s behavior.

- Level 3 (Cross-Video Understanding): Multi-POV Reasoning The peak of complexity, requiring reasoning across synchronized footage. Tasks include sync-referring and POV identification (e.g., attributing an action to the correct viewpoint).

5. A Taxonomy of Failure: Diagnosing Hallucinations

To move beyond surface-level metrics, we employ a Structured Distractor Taxonomy. This enables researchers to pinpoint the “hallucination profile” of a model:

- Lexical Distractors: Text-based variants (e.g., changing the subject or using antonyms).

- Scene Distractors: Vision-based options that list plausible but non-existent objects or events.

- Temporal Distractors: References to events that occurred, but outside the queried time window.

- Role Distractors: Swapping agent attribution (e.g., misattributing an enemy’s action to the POV player).

- Cross-Video Distractors: References to events occurring in a different synchronized video stream.

6. Benchmarking the Giants: Human vs. Frontier MLLMs

We evaluated 16 frontier models, including GPT-5, Gemini 3 Flash, and Qwen3 VL. While Gemini-3-Pro was utilized as a high-fidelity label generator, Gemini 2.5 Pro emerged as the top evaluator model. Despite its strength, a massive “Human-to-Model Gap” remains: humans achieved 80.5% aggregate accuracy, while Gemini 2.5 Pro reached only 71.3%.

The data reveals three fatal failure modes:

- Temporal and Cross-Video Grounding: Models struggle significantly with “Occurrence Count” (36.5%) and “Cross-Video Ordering” (38.8%). The low score in event counting demonstrates a fundamental failure in sustained temporal attention over long horizons—a critical limitation of current transformer-based video processing.

- The Attribution Gap: There is a pronounced 8% performance drop when models track “Other Agents” versus “World Objects.” This “Other Agent Attribution” failure is safety-critical; models frequently misattribute actions between the player and external entities.

- Decision Density Sensitivity: Accuracy correlates inversely with pace. Fast-paced, decision-dense titles like Counter-Strike 2, Battlefield 6, and Apex Legends consistently yield the highest error rates, proving that high-speed environmental changes overwhelm current attention mechanisms.

7. Generalization and Sequential Logic

To confirm these findings are not artifacts of gaming data, we applied our pipeline to real-world datasets: Nexar (dashcam collisions) and Ego-Humans (Lego assembly). Even with a lower decision density (), the relative difficulty ordering remained identical. This confirms that the cognitive hierarchy defined in GameplayQA is a universal law of MLLM failure.

Furthermore, our Temporal Ablation studies (specifically the Shuffled Frames condition) revealed that while basic perception (L1) remained relatively stable, performance on L2 and L3 reasoning collapsed. This proves that frontier models are failing specifically at sequential logic and temporal ordering, not just visual recognition.

8. Conclusion: Building Robust Embodied AI

GameplayQA is more than a benchmark; it is a hallucination profile for the next generation of 3D AGIs. It exposes the perceptual bottlenecks that currently prevent MLLMs from serving as reliable backbones for autonomous agents.

Key Takeaways for Practitioners:

- Address Sustained Temporal Attention: Current architectures cannot reliably count or order events over long horizons; this is a primary blocker for planning agents.

- Bridge the Attribution Gap: Models fail to distinguish “Self” from “Other.” Solving this misattribution is non-negotiable for safety-critical multi-agent environments.

- Stress-Test for Density: Evaluation must reflect the decision-making speed of the target environment. If a model fails at , it is not ready for real-time 3D deployment.

- Move Beyond Accuracy: Use structured distractors to diagnose how your model hallucinations. A “Role” error is far more dangerous in a robotics context than a “Lexical” error.

Our goal is to stimulate a new wave of research at the intersection of world modeling and agentic perception. Only by diagnosing these grounding-limited architectures can we build the robust, embodied AI of the future.

Read the full paper on arXiv · PDF