Experimental Evaluation of Security Attacks on Self-Driving Car Platforms

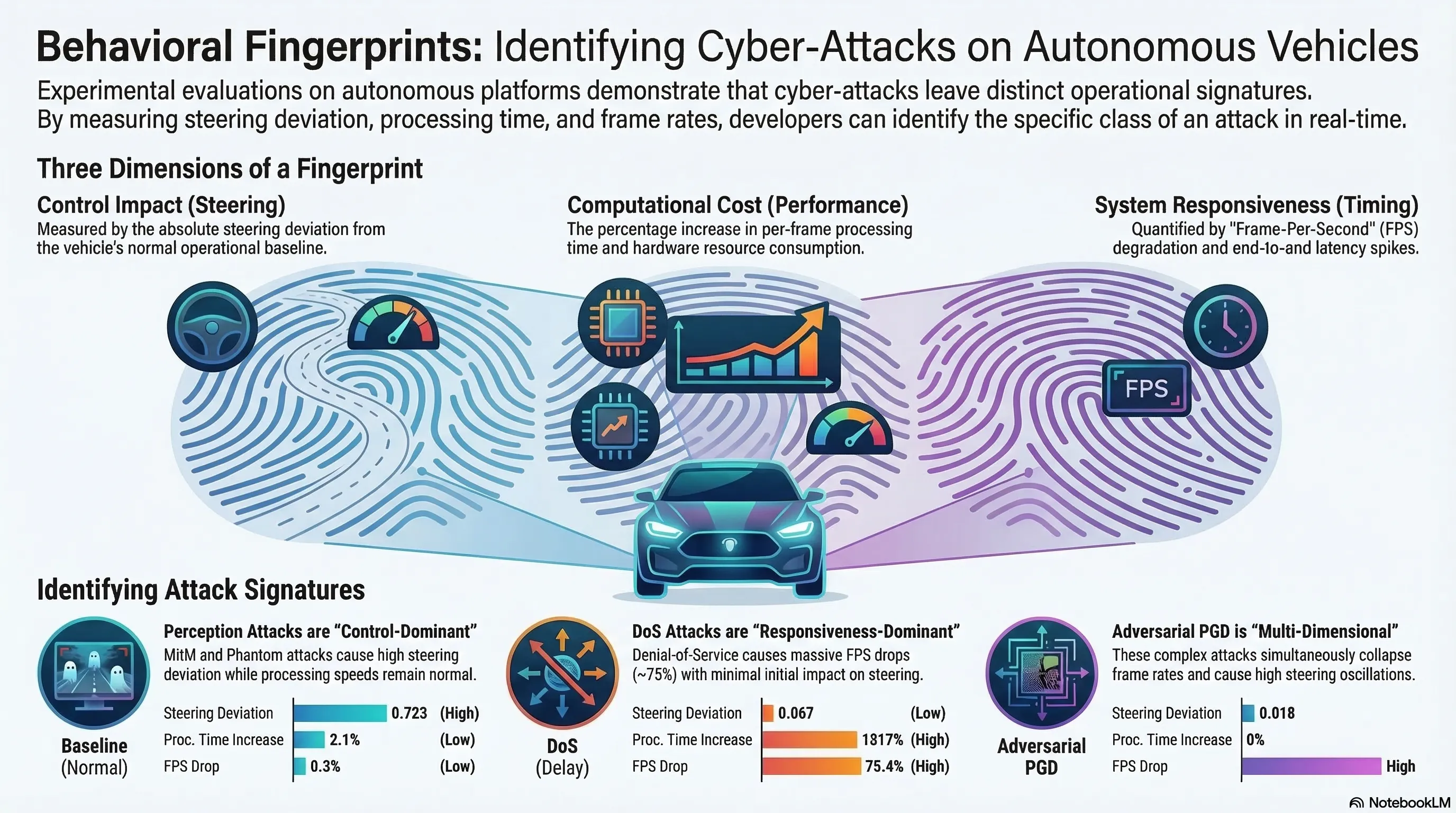

First systematic on-hardware experimental evaluation of five attack classes on low-cost autonomous vehicle platforms, establishing distinct attack fingerprints across control deviation, computational cost, and runtime responsiveness.

1. From Simulation to Hardware

Most autonomous vehicle security research is carried out in simulation. This paper presents the first systematic on-hardware experimental evaluation of five distinct attack classes on real autonomous vehicle platforms (JetRacer, Yahboom), with every attack run under a standardized 13-second protocol.

The shift from simulation to hardware matters: physical constraints (latency, sensor noise, actuator limitations) change both attack effectiveness and defense feasibility.

2. Five Attack Classes, Five Fingerprints

Each attack class produces a distinct measurable signature across three dimensions (control deviation, computational cost, and runtime responsiveness):

- MITM (Man-in-the-Middle): intercepts and modifies sensor data, causing high steering deviation with minimal computational overhead

- Phantom attacks: project false features into the environment (e.g., fake lane markings), causing perception-layer confusion

- PGD (Projected Gradient Descent): adversarial input perturbations that both alter steering and impose computational load

- DoS (Denial of Service): degrades frame rate and responsiveness without directly perturbing the control plane

- Environmental projection: physical-world attacks using projected images or modified road markings
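
To make the three fingerprint dimensions concrete, here is a minimal sketch of how they could be computed from a baseline run and an attacked run. The metric definitions, the `Run` and `Fingerprint` types, and the field names are assumptions chosen for illustration; the paper's exact metrics are not given in this summary.

```python
from __future__ import annotations

from dataclasses import dataclass
from statistics import mean


@dataclass
class Run:
    """Hypothetical log of one run (field names are illustrative)."""
    steering: list[float]      # one steering command per processed frame
    latency_ms: list[float]    # per-frame processing time in milliseconds
    duration_s: float = 13.0   # run length; 13 s matches the protocol above


@dataclass(frozen=True)
class Fingerprint:
    control_deviation: float   # mean |attacked - baseline| steering
    compute_overhead: float    # attacked / baseline mean latency (1.0 = unchanged)
    fps_ratio: float           # attacked / baseline frame rate (1.0 = unchanged)


def fingerprint(baseline: Run, attacked: Run) -> Fingerprint:
    """Compare an attacked run against a clean baseline run.

    Assumes both runs contain at least one frame.
    """
    # zip() stops at the shorter run, so dropped frames do not misalign pairs.
    deviation = mean(abs(a - b) for a, b in zip(attacked.steering, baseline.steering))
    overhead = mean(attacked.latency_ms) / mean(baseline.latency_ms)
    baseline_fps = len(baseline.steering) / baseline.duration_s
    attacked_fps = len(attacked.steering) / attacked.duration_s
    return Fingerprint(deviation, overhead, attacked_fps / baseline_fps)
```

Expressing cost and frame rate as ratios to the baseline keeps the fingerprint comparable across platforms with different hardware, which is one plausible reason to normalize this way.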

3. Attack-Aware Monitoring

The distinct fingerprints suggest a path toward signature-based defense: different attack types produce different observable patterns in system telemetry. This means a monitoring system could potentially:

- Detect that an attack is occurring

- Classify the attack type based on its signature

- Trigger type-appropriate defensive responses
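
The detect, classify, and respond steps above can be sketched as a small rule-based classifier. This is only an illustration under stated assumptions: the thresholds, the labels, and the `RESPONSES` table are hypothetical, and the rules encode only the signatures described in the text (MITM, PGD, DoS). The text does not give telemetry signatures for phantom or environmental projection attacks, so this sketch does not try to separate them from MITM.

```python
def classify(control_deviation: float,
             compute_overhead: float,
             fps_ratio: float,
             dev_thresh: float = 0.2,       # hypothetical threshold
             overhead_thresh: float = 1.5,  # hypothetical threshold
             fps_thresh: float = 0.7) -> str:
    """Map a telemetry fingerprint to a coarse attack label."""
    high_dev = control_deviation > dev_thresh
    high_load = compute_overhead > overhead_thresh
    low_fps = fps_ratio < fps_thresh

    if not (high_dev or high_load or low_fps):
        return "none"
    if high_dev and high_load:
        return "pgd-like"    # steering deviation plus computational load
    if high_dev:
        return "mitm-like"   # steering deviation with little overhead
    if low_fps:
        return "dos-like"    # responsiveness drops, control output intact
    return "unknown"         # anomalous, but matches no described signature


# Hypothetical type-appropriate responses, keyed by label.
RESPONSES = {
    "none": "continue",
    "pgd-like": "switch to a fallback perception path",
    "mitm-like": "reject unauthenticated sensor data",
    "dos-like": "rate-limit traffic and slow the vehicle",
    "unknown": "perform a safe stop",
}
```

For example, `RESPONSES[classify(0.5, 1.0, 1.0)]` selects the MITM-style response, since the deviation is high while cost and frame rate are unchanged.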

4. Multi-Layer Attack Surface

The five attack classes operate at fundamentally different layers of the system stack:

- Perception layer: adversarial perturbations, phantom features, environmental projection

- Network layer: MITM, DoS

- Compute layer: resource exhaustion via PGD

This multi-layer attack surface means that no single defense mechanism can address all threats. Security requires a defense-in-depth approach that monitors and protects each layer independently.
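
One way to picture layer-independent monitoring is a set of small checks, one per layer, that run separately and never rely on each other's results. The sketch below is an assumption-laden illustration: the telemetry keys (`steering_delta`, `msg_gap_ms`, `inference_ms`) and all thresholds are hypothetical and are not taken from the paper.

```python
from typing import Callable, Dict, List, Optional

Telemetry = Dict[str, float]
Monitor = Callable[[Telemetry], Optional[str]]


def perception_monitor(t: Telemetry) -> Optional[str]:
    # Flags an implausibly large frame-to-frame steering change.
    return "perception: steering jump" if abs(t["steering_delta"]) > 0.5 else None


def network_monitor(t: Telemetry) -> Optional[str]:
    # Flags a long gap between consecutive sensor messages.
    return "network: message gap" if t["msg_gap_ms"] > 100.0 else None


def compute_monitor(t: Telemetry) -> Optional[str]:
    # Flags unusually slow per-frame inference.
    return "compute: slow inference" if t["inference_ms"] > 50.0 else None


MONITORS: List[Monitor] = [perception_monitor, network_monitor, compute_monitor]


def run_monitors(t: Telemetry, monitors: List[Monitor] = MONITORS) -> List[str]:
    """Run every layer monitor independently and collect all alerts."""
    alerts: List[str] = []
    for monitor in monitors:
        try:
            alert = monitor(t)
        except KeyError:
            # Missing telemetry for one layer must not silence the others.
            alert = f"{monitor.__name__}: missing telemetry"
        if alert:
            alerts.append(alert)
    return alerts
```

Because each monitor is evaluated on its own, a failure or blind spot in one layer's check does not suppress alerts from the other layers.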

5. Implications for Embodied AI Security

The framework generalizes beyond autonomous vehicles to any embodied AI system with sensors, actuators, and network connectivity. The key insight: attacks at different system layers produce qualitatively different effects, and defense strategies must be layer-aware.