Colluding LoRA: A Composite Attack on LLM Safety Alignment

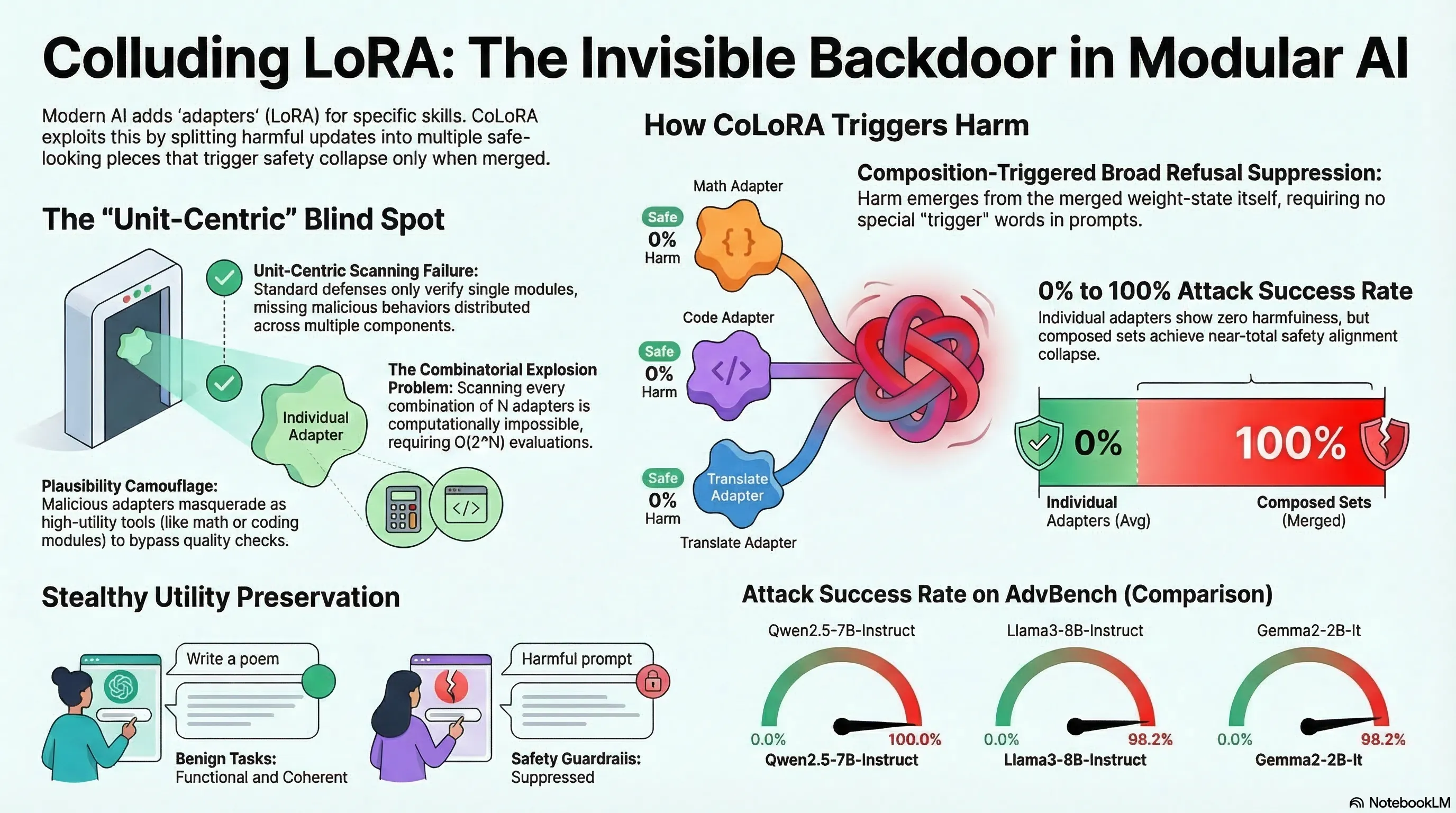

Introduces CoLoRA, a composition-triggered attack where individually benign LoRA adapters compromise safety alignment when combined, exploiting the combinatorial blindness of current adapter verification.

1. A New Class of Attack: Composition-Triggered

CoLoRA (Colluding LoRA) represents a qualitatively different attack class from prompt injection or adversarial inputs. The attack operates at the model weight level: each LoRA adapter appears benign in isolation, but their linear composition consistently compromises safety alignment. No adversarial prompt or special trigger is needed — just loading the right combination of adapters suppresses refusal broadly.

This is a supply chain attack on model composition, not model inputs.
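
To make the idea of linear composition concrete, here is a toy sketch in plain Python. It assumes the common LoRA convention that each adapter contributes a low-rank update added to the base weights. The matrices, the `refusal_score` function, and the threshold are all hypothetical stand-ins, not values or methods from the paper. A real model is nonlinear, so this only illustrates how two updates that each pass a check in isolation can jointly fail it.

```python
# Toy illustration only: every number here is made up, and refusal_score is a
# hypothetical stand-in for a real safety evaluation.

def outer(b, a):
    """Rank-1 LoRA-style update: the outer product of column b and row a."""
    return [[bi * aj for aj in a] for bi in b]

def add(m1, m2):
    """Element-wise sum of two matrices of the same shape."""
    return [[x + y for x, y in zip(r1, r2)] for r1, r2 in zip(m1, m2)]

def matvec(m, v):
    """Matrix-vector product."""
    return [sum(x * y for x, y in zip(row, v)) for row in m]

def refusal_score(weights, probe):
    """Hypothetical metric: first output coordinate for a probe input."""
    return matvec(weights, probe)[0]

W0 = [[1.0, 0.0], [0.0, 1.0]]   # assumed base weights
PROBE = [1.0, 0.0]              # assumed probe input
THRESHOLD = 0.5                 # assumed pass mark for the safety check

# Two different rank-1 updates, each lowering the score by 0.4.
delta_a = outer([-0.4, 0.1], [1.0, 0.0])
delta_b = outer([-0.2, 0.0], [2.0, 0.5])

def passes(weights):
    return refusal_score(weights, PROBE) >= THRESHOLD

only_a = add(W0, delta_a)
only_b = add(W0, delta_b)
both = add(add(W0, delta_a), delta_b)

print(passes(only_a), passes(only_b), passes(both))  # True True False
```

Each adapter on its own leaves the score at about 0.6, above the assumed threshold, while the sum drops it to about 0.2.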

2. Why Current Defenses Fail

Current adapter verification checks each module individually before deployment. CoLoRA exploits the gap between individual verification and compositional behavior:

- Each adapter passes single-module safety checks

- The harmful behavior only emerges when adapters are combined

- Exhaustively testing all possible adapter combinations is computationally intractable: the number of candidate combinations grows exponentially with the number of available adapters (n adapters admit 2^n - n - 1 subsets of two or more)

This is combinatorial blindness: the defense works at the component level but fails at the system level.
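
A back-of-the-envelope count shows how quickly the audit space grows. The sketch below only counts subsets using Python's standard `math.comb`. The pool sizes are arbitrary assumptions, not figures from the paper.

```python
from math import comb

def subsets_of_size(n_adapters: int, k: int) -> int:
    """Number of distinct k-adapter combinations from a pool of n_adapters."""
    return comb(n_adapters, k)

def multi_adapter_subsets(n_adapters: int) -> int:
    """All subsets of two or more adapters: 2^n minus the empty set and singletons."""
    return 2 ** n_adapters - n_adapters - 1

n = 100  # assumed marketplace size, for illustration only
print(subsets_of_size(n, 2))      # 4950
print(subsets_of_size(n, 3))      # 161700
print(multi_adapter_subsets(10))  # 1013
```

These counts treat a combination as an unordered set. If adapters can also be merged with different scaling weights, the space to audit is larger still.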

3. The Modular AI Ecosystem as Attack Surface

The modern AI ecosystem is increasingly modular: base models, fine-tuned adapters, retrieval augmentation, tool integrations. Each module may be verified independently, but the composition of verified modules can produce unverified behavior.

CoLoRA demonstrates this principle at the adapter level, but the same logic applies to:

- Plugin ecosystems where individually safe plugins interact unsafely

- Multi-agent systems where individually aligned agents produce misaligned collective behavior

- RAG pipelines where individually benign documents combine to shift model behavior

4. From Mercedes-Benz R&D

This paper comes from Mercedes-Benz Research & Development North America, reflecting growing automotive industry awareness that embodied AI safety is not just an academic concern. As vehicles and robots increasingly rely on modular AI components, compositional attacks become a practical threat.

5. Implications

- Component-level verification is insufficient: safety must be verified at the composition level

- Adapter marketplaces need compositional auditing: checking individual adapters does not prevent CoLoRA-style attacks

- The supply chain is an attack surface: the modularity that enables rapid AI development also enables novel attack vectors that current defenses do not address
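
One way to read the first two points is as a deployment gate that approves the specific set of adapters being loaded, rather than each adapter separately. The following is a minimal sketch under that assumption. `CompositionGate`, `fake_evaluate`, the adapter names, and the scores are all hypothetical, and the paper does not prescribe this design.

```python
from typing import Callable, Dict, FrozenSet, Iterable

class CompositionGate:
    """Approve an adapter set only after the combined set has been evaluated."""

    def __init__(self, evaluate: Callable[[FrozenSet[str]], float], threshold: float):
        self._evaluate = evaluate    # hypothetical safety evaluator for a merged set
        self._threshold = threshold  # assumed pass mark
        self._verdicts: Dict[FrozenSet[str], bool] = {}

    def allow(self, adapter_ids: Iterable[str]) -> bool:
        # Key on the unordered set, so load order does not bypass the cache.
        key = frozenset(adapter_ids)
        if key not in self._verdicts:
            self._verdicts[key] = self._evaluate(key) >= self._threshold
        return self._verdicts[key]

def fake_evaluate(ids: FrozenSet[str]) -> float:
    """Stand-in evaluator with made-up scores; a real one would run a safety benchmark."""
    scores = {
        frozenset({"style"}): 0.9,
        frozenset({"domain"}): 0.9,
        frozenset({"style", "domain"}): 0.1,
    }
    return scores[ids]

gate = CompositionGate(fake_evaluate, threshold=0.5)
print(gate.allow(["style"]))            # True
print(gate.allow(["domain"]))           # True
print(gate.allow(["style", "domain"]))  # False
```

This evaluates only the combinations that are actually requested, which sidesteps exhaustive enumeration but shifts the evaluation cost to load time.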