A User-driven Design Framework for Robotaxi

Investigates real-world robotaxi user experiences through semi-structured interviews and autoethnographic rides to identify design requirements and propose an end-to-end user-driven design framework.

1. Introduction: Moving Beyond Technical Performance

Autonomous vehicle (AV) development has shifted from restricted pilot testing to large-scale commercial deployment. As of November 2025, platforms like Apollo Go have surpassed 17 million completed rides, signaling that technical performance in perception and path planning is rapidly maturing. However, for the AI safety practitioner, a new and more complex frontier has emerged: the “human factor.”

While an autonomous system may navigate an intersection with zero legal infractions, the resulting human-robot interaction (HRI) often reveals covert system failures: instances where the car performs safely by engineering standards but behaves in socially awkward or unpredictable ways, eroding passenger trust. This analysis synthesizes real-world user data and autoethnographic research into a design framework that bridges the gap between technical robustness and the psychological safety of the passenger.

2. The Allure of the Autonomous Ride: Agency and Consistency

Market adoption is currently driven by a distinct set of incentives that differentiate the robotaxi experience from traditional ride-hailing.

Primary User Incentives:

- Low Cost: Drastic price advantages facilitated by aggressive promotional subsidies and the removal of labor costs.

- Curiosity: A high-tech novelty factor that attracts early adopters.

- Social Recommendation: Peer-vetted trust that mitigates the perceived risk of a driverless system.

Beyond economics, the transition to robotaxis represents a psychological shift from passenger-as-guest to passenger-as-operator. In traditional rides, users often experience “social friction” or a “communicative burden,” such as the awkwardness of negotiating climate control or music with a human driver (P11, P12). In contrast, the robotaxi offers a “semi-private transition space.” Users cited a “ritual of ownership,” such as customizing the vehicle’s rooftop light color via the app, which creates a sense of exclusivity and personal connection to the machine. At the same time, the standardized, driverless cabin provides a predictable environment free from the “human intrusion” of driver distractions, odors, or emotional volatility.

3. The Friction Points: Identifying Systematic Failure Modes

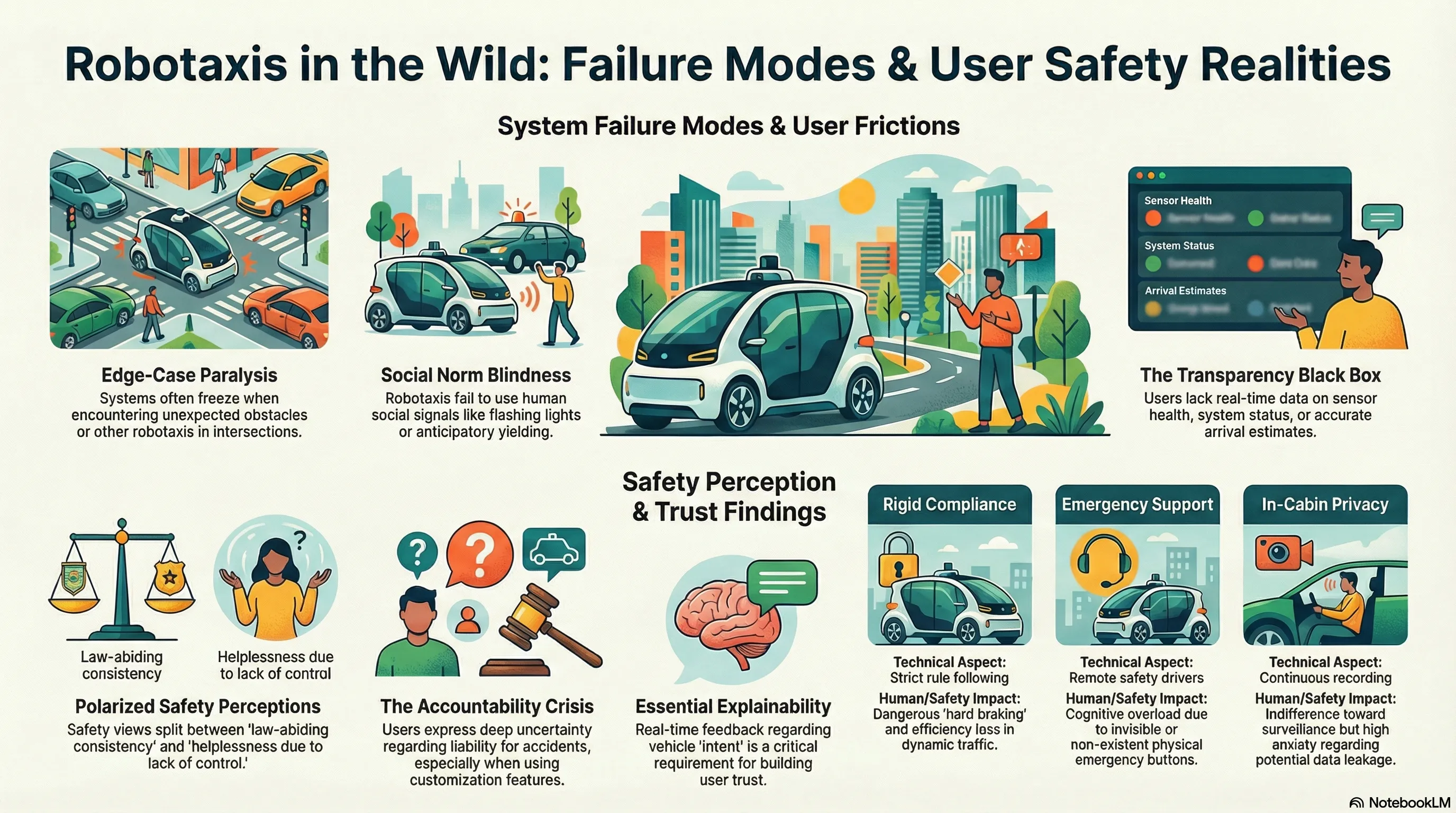

Despite high technical benchmarks, systematic failure modes persist in the interaction layer. These are not just “bugs” but fundamental HRI misalignments:

- Limited Flexibility & The “Invisible Bus Stop”: The system’s overly cautious driving style frequently results in efficiency loss. A critical failure is the “Invisible Bus Stop” phenomenon, where vehicles adhere strictly to GPS coordinates, ignoring passengers only meters away or stopping in hazardous locations (e.g., middle lanes) because the system lacks the situational intuition to “pull forward” for safety.

- Transparency Gaps & Price Discrimination: Users report “Unknown” waiting times during hailing, with no availability data disclosed. Furthermore, opaque pricing logic has led to perceptions of “killing loyal customers,” a form of algorithmic price discrimination in which fares rise significantly as introductory discounts fade (P06).

- Robustness & Edge-Case Vulnerabilities: Systems struggle with social negotiation. The “robotaxi standoff” occurs when two AVs meet at a turn and remain stationary indefinitely, unable to interpret the other’s intent. A notable safety failure occurred during the “large bag” incident: when encountering a wind-blown bag, the vehicle turned on hazard lights and stopped dead in the middle of a live road, transforming a minor obstacle into a major traffic hazard.

- Emergency Handling UI Failures: There is a dangerous lack of “actionable literacy” in the cabin. Emergency controls are often hidden or poorly visualized. Research identified that safety instructions used “cute,” small fonts that were difficult to read during cognitive overload, and signage was inconsistent (e.g., instructions to pull a handle vs. press a button).

4. The Safety & Ethics Paradox

The perception of safety is polarized between the machine’s lack of error and its lack of intuition.

| Safety as a Benefit | Safety as a Concern |
|---|---|
| Rule-Abiding: Absolute adherence to limits and laws without fatigue. | Diminished Control: Helplessness during “close calls” or abnormal maneuvers. |
| Elimination of Conflict: No risk of harassment, road rage, or social aggression. | Doubts about AI Intuition: Skepticism regarding the AI’s ability to match human “subconscious” reflexes. |
| Machine-Based Monitoring: Perception that cameras are “low-profile” and less intrusive than human eyes. | Designer Expertise Gaps: Uncertainty if the “code writers” possess actual real-world driving experience. |

The Tension of Customization vs. Accountability

A significant ethical dilemma arises with “User Customization.” Users expressed a desire for “fast” or “aggressive” modes, yet this creates a moral hazard. If a passenger requests higher speeds and an accident occurs, the liability framework becomes blurred (P18). Is the passenger an operator or a consumer? This ambiguity necessitates a clear legal demarcation between user-driven parameters and system-level constraints.
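One way to make the boundary between user-driven parameters and system-level constraints concrete is to clamp every passenger request against platform limits and log each override. The sketch below is illustrative only: `SystemLimits`, `RidePreferences`, `apply_preferences`, and all numeric limits are hypothetical and do not come from the study or any platform.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class SystemLimits:
    # Hypothetical platform-level limits; values are illustrative only.
    max_speed_kph: float = 60.0
    min_following_gap_s: float = 1.5


@dataclass(frozen=True)
class RidePreferences:
    target_speed_kph: float
    following_gap_s: float


def apply_preferences(
    requested: RidePreferences, limits: SystemLimits
) -> Tuple[RidePreferences, List[str]]:
    """Clamp passenger requests to system limits and record each override."""
    overrides: List[str] = []

    speed = requested.target_speed_kph
    if speed > limits.max_speed_kph:
        overrides.append(f"target_speed_kph {speed} -> {limits.max_speed_kph}")
        speed = limits.max_speed_kph

    gap = requested.following_gap_s
    if gap < limits.min_following_gap_s:
        overrides.append(f"following_gap_s {gap} -> {limits.min_following_gap_s}")
        gap = limits.min_following_gap_s

    return RidePreferences(speed, gap), overrides


# A "fast mode" request that exceeds both limits is clamped and logged.
applied, audit_log = apply_preferences(RidePreferences(80.0, 1.0), SystemLimits())
```

Keeping the override log separate from the applied values gives the platform an audit trail of what the passenger asked for versus what the system actually did, which is the kind of record a liability discussion would need.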

5. The End-to-End User-Driven Design Framework

To resolve these paradoxes, we propose a framework organized around the four stages of the journey: hailing, pick-up, traveling, and drop-off.

Hailing: Disclosure and Preparation

- Pre-ride Configuration: Enable waiting-phase customization of climate and lighting to establish a sense of agency before entry.

- System Disclosure: Provide a “Vehicle Health Check” (battery levels, tire pressure, and sensor status) to build pre-trip confidence.
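As a rough illustration of the “Vehicle Health Check” disclosure, the sketch below turns battery, tire, and sensor readings into short status lines for the hailing screen. The `VehicleHealth` fields, the thresholds, and the `health_summary` helper are assumptions made for this example, not a documented platform API.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass
class VehicleHealth:
    battery_pct: float
    tire_pressure_kpa: List[float]  # one reading per tire
    sensors_ok: Dict[str, bool]     # sensor name -> self-test passed


def health_summary(
    health: VehicleHealth,
    min_battery_pct: float = 20.0,                       # hypothetical threshold
    tire_range_kpa: Tuple[float, float] = (200.0, 260.0),  # hypothetical range
) -> Tuple[bool, List[str]]:
    """Return (ready, display_lines) for the pre-ride screen."""
    low, high = tire_range_kpa
    battery_ok = health.battery_pct >= min_battery_pct
    tires_ok = all(low <= p <= high for p in health.tire_pressure_kpa)
    failed = sorted(name for name, ok in health.sensors_ok.items() if not ok)

    battery_status = "OK" if battery_ok else "LOW"
    tire_status = "OK" if tires_ok else "CHECK"
    sensor_status = "OK" if not failed else "FAULT: " + ", ".join(failed)

    lines = [
        f"Battery: {health.battery_pct:.0f}% ({battery_status})",
        f"Tires: {tire_status}",
        f"Sensors: {sensor_status}",
    ]
    return battery_ok and tires_ok and not failed, lines


ready, lines = health_summary(
    VehicleHealth(82.0, [230.0, 231.0, 229.0, 232.0], {"lidar": True, "camera_front": True})
)
```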

Pick-up: Context-Aware Facilitation

- Dynamic Localization: Use Bluetooth and Visual ID fusion to identify the passenger’s exact position, preventing the “passenger-chasing-the-car” failure.

- Automated Accessibility: Implement sensor-triggered trunk opening for luggage and multi-modal alerts for visually impaired users.
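The source does not specify how the Bluetooth and Visual ID signals would be fused. One common option for combining two noisy position estimates is inverse-variance weighting, sketched below under the assumption that each source reports an (x, y) estimate in metres along with an uncertainty. The function name and the sample uncertainties are hypothetical.

```python
from typing import Tuple

Point = Tuple[float, float]


def fuse_positions(
    ble_xy: Point, ble_sigma_m: float, vis_xy: Point, vis_sigma_m: float
) -> Tuple[Point, float]:
    """Inverse-variance weighted average of two (x, y) estimates in metres.

    The source with the smaller standard deviation gets the larger weight.
    """
    w_ble = 1.0 / ble_sigma_m ** 2
    w_vis = 1.0 / vis_sigma_m ** 2
    total = w_ble + w_vis

    x = (w_ble * ble_xy[0] + w_vis * vis_xy[0]) / total
    y = (w_ble * ble_xy[1] + w_vis * vis_xy[1]) / total
    fused_sigma = (1.0 / total) ** 0.5
    return (x, y), fused_sigma


# Assumed example: coarse Bluetooth fix (sigma 2 m) and a tighter visual fix (sigma 1 m).
position, sigma = fuse_positions((0.0, 0.0), 2.0, (5.0, 0.0), 1.0)
```

In this example the fused estimate lands much closer to the visual fix, and the fused uncertainty is smaller than either input, which is what lets the vehicle stop at the passenger rather than at a fixed coordinate.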

Traveling: Explainability and Alignment

- Action-Rationale Explainability: The system must broadcast situational rationales for counterintuitive behaviors (e.g., “Braking for obstacle ahead” or “Detouring for construction”) to prevent passenger panic.

- Driving Norm Alignment: Integrate culturally shared social signals, such as “thank you” light flashes and anticipatory braking, to make the vehicle a “predictable participant” in traffic.
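A minimal sketch of action-rationale explainability is a lookup from a (maneuver, cause) pair to a passenger-facing message. The two quoted prompts come from the text above; the `Maneuver` enum, the cause labels, the third table entry, and the fallback wording are assumptions for illustration.

```python
from enum import Enum
from typing import Optional


class Maneuver(Enum):
    HARD_BRAKE = "hard braking"
    DETOUR = "detour"
    STOP_IN_LANE = "stop in lane"


# Hypothetical (maneuver, cause) -> rationale table.
RATIONALES = {
    (Maneuver.HARD_BRAKE, "obstacle"): "Braking for obstacle ahead",
    (Maneuver.DETOUR, "construction"): "Detouring for construction",
    (Maneuver.STOP_IN_LANE, "obstacle"): "Stopping for obstacle ahead",
}


def explain(maneuver: Maneuver, cause: Optional[str]) -> str:
    """Return a specific rationale, or a fallback that still names the maneuver."""
    message = RATIONALES.get((maneuver, cause))
    if message is not None:
        return message
    # Even without a known cause, avoid a generic "traffic is complex" prompt.
    return f"Unplanned {maneuver.value}; cause not yet identified"


announcement = explain(Maneuver.HARD_BRAKE, "obstacle")
```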

Drop-off: Accountable Feedback

- Feedback Triage: Move away from generic star ratings. Implement granular feedback where users “evaluate the code” (P17). Ratings should distinguish between the “data-processing model” and “physical vehicle maintenance” to ensure insights reach the relevant engineering teams.
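Feedback triage can be sketched as a routing table from feedback category to the responsible team. The two team names follow the distinction drawn above; the category labels and the `triage` function are hypothetical.

```python
from collections import defaultdict
from typing import Dict, List

# Hypothetical category -> team routing table.
ROUTES = {
    "driving_behavior": "data-processing model",
    "route_choice": "data-processing model",
    "cabin_cleanliness": "physical vehicle maintenance",
    "hardware_fault": "physical vehicle maintenance",
}


def triage(feedback_items: List[dict]) -> Dict[str, List[dict]]:
    """Group feedback by responsible team; unknown categories go to 'unrouted'."""
    queues: Dict[str, List[dict]] = defaultdict(list)
    for item in feedback_items:
        team = ROUTES.get(item["category"], "unrouted")
        queues[team].append(item)
    return dict(queues)


queues = triage([
    {"category": "driving_behavior", "comment": "Stopped in the middle lane"},
    {"category": "cabin_cleanliness", "comment": "Seat was dirty"},
    {"category": "pricing", "comment": "Fare jumped after the discount ended"},
])
```

An explicit “unrouted” queue keeps feedback that fits no category visible instead of silently dropping it.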

6. Future Directions: The Robotaxi as a “Transition Space”

As the technology matures, the robotaxi will evolve from a tool for movement into a “semi-private transition space.”

- Account-bound AI Companions: Users envision personalized virtual assistants that follow their account, not the vehicle. This “DeepSeek-style” personalization allows the AI to adapt its tone (tourist guide vs. child-friendly mentor) across different cars.

- Chartered Mobile Personal Spaces: Users expect “chartered” models in which a vehicle serves as a mobile base for an entire day, or a parked “rest space” that they can pay to use for a period of stationary solitude.

7. Conclusion & Key Takeaways for AI Safety Practitioners

Technical excellence in Reinforcement Learning from Human Feedback (RLHF) and world models is insufficient if the HRI layer remains opaque. A critical risk for safety developers is Reward Model Pollution: if users provide generic 5-star ratings for rides that felt “uncanny” or “socially misaligned” but were technically “safe,” the RLHF loop may over-optimize for safety at the total expense of social predictability, leading to the very rigid behaviors that frustrate users today.
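The pollution risk can be shown with a small arithmetic sketch. The ratings below are invented purely for illustration and are not data from the study. Every ride gets a generic 5 stars, so the single aggregate looks perfect, while a separately collected predictability score (a hypothetical second feedback dimension) exposes the misalignment.

```python
from typing import Dict, List

# Invented ratings for illustration only; not data from the study.
RIDES = [
    {"stars": 5, "safety": 5, "predictability": 5},
    {"stars": 5, "safety": 5, "predictability": 2},
    {"stars": 5, "safety": 5, "predictability": 2},
]


def per_dimension_means(rides: List[Dict[str, int]]) -> Dict[str, float]:
    """Average each feedback dimension separately across rides."""
    return {key: sum(r[key] for r in rides) / len(rides) for key in rides[0]}


means = per_dimension_means(RIDES)
# The star average is 5.0, yet the predictability average is only 3.0.
```

A reward signal built on stars alone would see nothing to fix here, which is the argument for the granular feedback described in the drop-off stage.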

Executive Summary Checklist for Developers:

- Implement Action-Rationale Explainability: Replace repetitive “traffic is complex” voice prompts with specific situational data for all counterintuitive maneuvers.

- Mandate Non-Networked Overrides: Ensure the cabin features physical, non-software-dependent buttons for emergency stops and rescue communication.

- Incorporate Social Driving Etiquette: Algorithms must move beyond rule compliance to include “driving etiquette” (social signaling) to ensure predictable integration into the traffic ecosystem.

- Standardize Feedback Accountability: Create a transparent chain of custody for user feedback, ensuring passengers know when their “evaluation of the code” results in a system update.

- Clarify Customization Liability: Define hard-coded safety limits that cannot be overridden by user “fast mode” requests, maintaining platform-level accountability.
