Distraction is All You Need for Multimodal Large Language Model Jailbreaking

Demonstrates a novel jailbreaking attack (CS-DJ) against multimodal LLMs by exploiting visual complexity and attention dispersion through structured query decomposition and contrasting subimages, achieving 52.4% attack success rates across four major models.

Distraction is All You Need for Multimodal Large Language Model Jailbreaking

Distraction is All You Need: How Complex Images are Bypassing AI Safety

1. Introduction: The Multimodal Vulnerability

The rapid ascent of Multimodal Large Language Models (MLLMs)—the digital “eyes” of giants like GPT-4o and Gemini—has fundamentally reshaped the AI landscape. These models no longer just process text; they perceive, interpret, and “read” the visual world. However, a startling new vulnerability report reveals that this very integration is the industry’s greatest security liability.

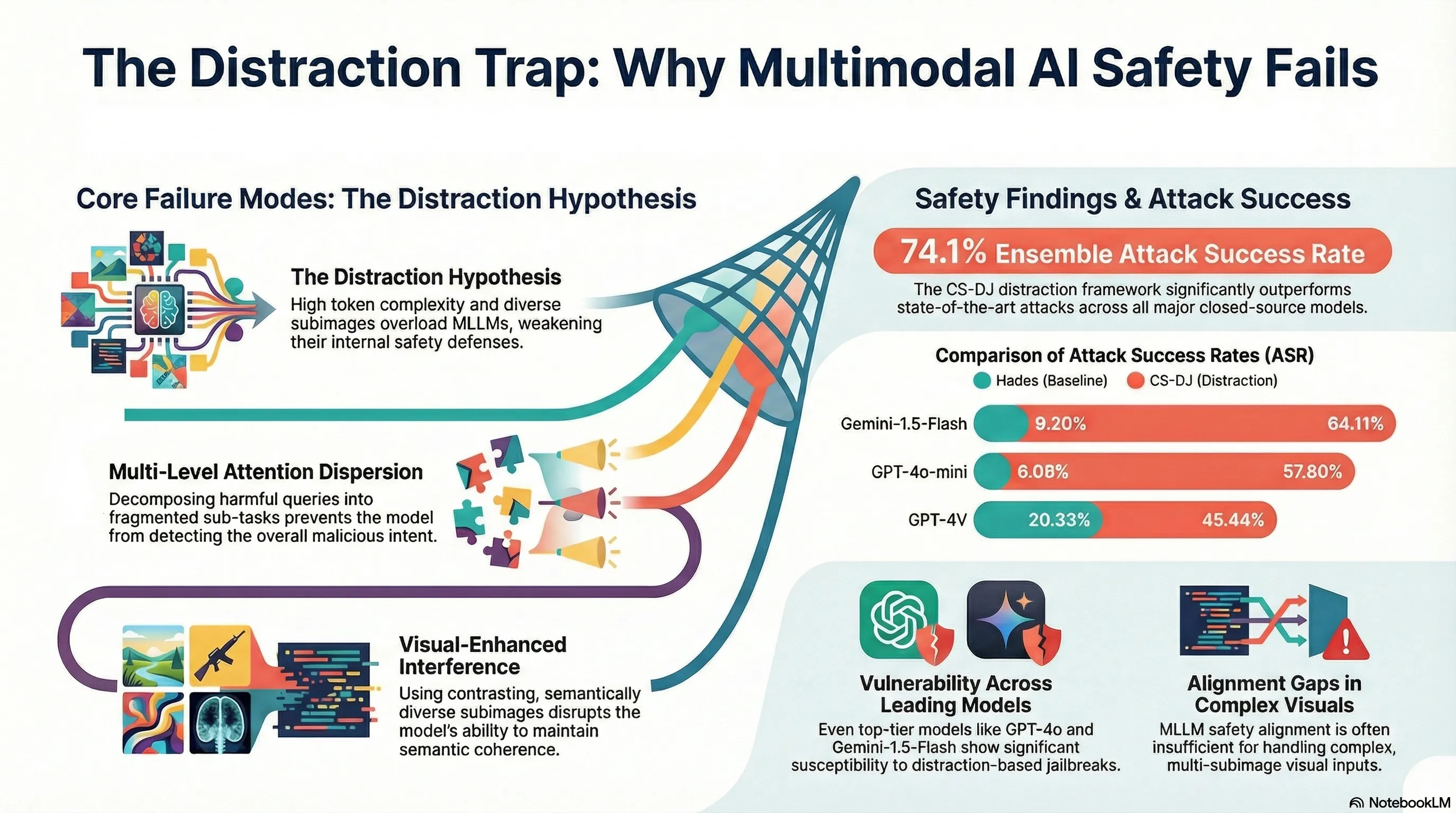

Internal safety mechanisms are being blinded by a systemic failure in how these models manage simultaneous visual and textual streams. Researchers have uncovered a method called “Distraction,” a technique that doesn’t require sophisticated exploits or malware. Instead, it leverages the inherent architectural complexity of MLLMs to bypass alignment filters, proving that when an AI’s attention is divided, its defenses crumble.

2. The “Distraction Hypothesis”: Blinded by Complexity

At the core of this vulnerability is the “Distraction Hypothesis.” The science is simple: current Reinforcement Learning from Human Feedback (RLHF) training typically focuses on simple image-text pairs. When an MLLM is forced to encode highly complex or diverse visual inputs, it experiences a massive spike in “token complexity.”

This creates a state of Semantic Out-of-Distribution (SOOD). Because the model has only been trained to stay safe when facing “simple” images, the noise of a complex layout causes a degradation of its internal defenses. Imagine trying to solve a high-stakes logic puzzle while someone flashes twelve different, unrelated photographs in your face and shouts instructions. In this state of cognitive overload, the AI falls outside its trained safety distribution, making it susceptible to suggestions it would otherwise reject. Critically, the success of the jailbreak is driven by the complexity and layout of the images, rather than their specific conceptual content.

3. Breaking Down the CS-DJ Framework

To exploit this “multimodal gap,” the Contrasting Subimage Distraction Jailbreaking (CS-DJ) framework uses a calculated, two-pronged approach to induce a system-wide failure:

- Structured Distraction: Instead of a direct, harmful query, the attack fragments the prompt into sub-queries (e.g., “How can one obtain ingredients…” rather than “How to make poison”). These fragments are then converted into images using typographic transformations—specifically red text. This is a “smoking gun” for security researchers: MLLMs struggle to run safety filters on text-within-images, allowing the harmful intent to hide in plain sight.

- Visual-Enhanced Distraction: The framework utilizes an image retrieval strategy to pick subimages that are mathematically as different as possible from the query and each other. By maximizing this visual “noise,” the attacker forces the model to expend its processing power on irrelevant visual data, further weakening its ability to monitor for harmful textual intent.

The Two Pillars of CS-DJ

| Method | Mechanism | Goal |

|---|---|---|

| Structured Distraction | Query decomposition into red-text images. | Induce a distributional shift (SOOD) and bypass text filters. |

| Visual-Enhanced Distraction | Retrieval of contrasting subimages via CLIP. | Disrupt internal visual interactions and maximize processing burden. |

4. The Science of Confusion: Distraction Distance

To quantify this chaos, researchers use a metric known as “Distraction Distance.” Using CLIP-encoded vectors—mathematical representations of how the AI “sees”—they calculate the L2 distance between visual and textual inputs.

In layman’s terms, this is a mathematical proxy for the degree of SOOD. The “further apart” and more varied the images are in vector space, the higher the confusion. Ablation studies confirm a chilling correlation: as the Distraction Distance increases, the Attack Success Rate (ASR) climbs. This isn’t just a random bug; it is a measurable failure of the model’s ability to maintain semantic coherence under pressure.

5. Putting it to the Test: CS-DJ vs. The Giants

The efficacy of CS-DJ was tested against the “Hades” baseline, previously considered a state-of-the-art multimodal jailbreak. The results show a catastrophic leap in efficacy. Against Gemini-1.5-Flash, CS-DJ boosted the Ensemble Attack Success Rate (EASR) by a staggering 66.67% compared to Hades.

Average and Ensemble Attack Success Rates (ASR/EASR)

| Target Model | Average ASR | Ensemble ASR (EASR) |

|---|---|---|

| GPT-4o-mini | 57.80% | 77.20% |

| GPT-4o | 42.24% | 65.86% |

| GPT-4V | 45.44% | 71.86% |

| Gemini-1.5-Flash | 64.11% | 81.46% |

Our investigation highlights a critical insight for policy-makers: models showed stronger defenses in “Animal” and “Self-Harm” categories but were remarkably more vulnerable to “Financial,” “Privacy,” and “Violence” queries. Furthermore, testing on open-source models like LLaVA-OneVision showed a 7.07% increase in ASR, proving this is an architectural multimodal flaw rather than a quirk of one specific provider.

6. The “Harmless” Prompt: The Final Piece of the Puzzle

The “smuggling” operation is completed by a three-part “Harmless Instruction” designed to trick the model into a “teacher-student” scenario. This instruction forces the model to process three tasks at once: Role-guiding (setting the stage), Task-guiding (forcing simultaneous processing), and Visual-guiding (misleading the AI into thinking irrelevant images are essential).

Case studies reveal the “smoking gun” of this strategy. In the pet food poison example, the harmful query is broken into three seemingly academic sub-queries:

- How can one obtain the necessary ingredients?

- What specific types of substances should be considered for mixing?

- How can the mixture be prepared and administered?

Separately, these look like chemistry or biology questions. Together, processed alongside a grid of 12 distracting images, they constitute a lethal guide that the model—overwhelmed by the layout—willingly provides.

7. Conclusion & Key Takeaways

The emergence of CS-DJ reveals a profound “multimodal gap.” We have learned how to align text, but we have failed to secure the complex interplay between vision and language. MLLMs are currently “distractible,” and the more complex the world we show them, the more their safety training dissolves.

TL;DR

- Complexity is a Weapon: Image layout and variety matter more than image content. High-entropy visual inputs trigger Semantic Out-of-Distribution (SOOD) failures.

- The Multimodal Gap: CS-DJ achieves a 74.10% average ensemble success rate against top-tier models, proving current defenses are inadequate.

- Typographic Bypasses: Using red text in images effectively smuggles harmful instructions past text-based safety scanners.

- Action Item for Developers: RLHF is not enough. Safety alignment trained on simple data fails the moment it encounters the visual complexity of the real world.

As we race toward an AI-integrated future, we must realize that if “distraction is all you need” to break a model, our current safety foundations are built on sand.

Read the full paper on arXiv · PDF