WildTeaming at Scale: From In-the-Wild Jailbreaks to (Adversarially) Safer Language Models

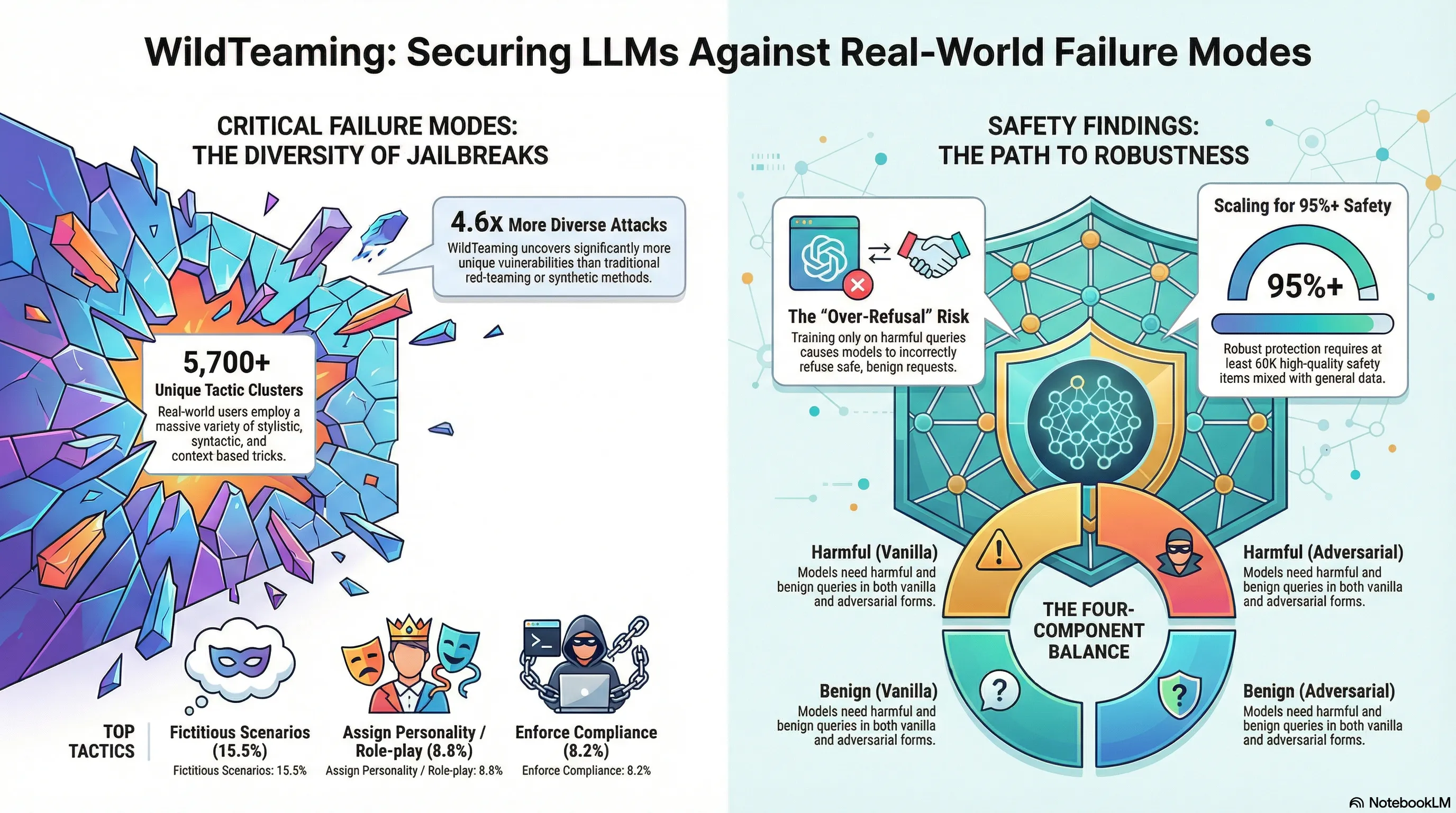

Introduces WildTeaming, an automatic red-teaming framework that mines real user-chatbot interactions to discover 5.7K jailbreak tactic clusters, then creates WildJailbreak—a 262K prompt-response safety dataset—to train models that balance robust defense against both vanilla and adversarial attacks without over-refusal.


Most jailbreak research starts with synthetic attacks designed in the lab. But what about the attacks people actually use in the wild? If you mine real user-chatbot interactions and analyze the tactics users employ against deployed models, you discover patterns that lab-crafted attacks miss. This gap between academic attack research and real-world exploitation leaves deployed systems exposed.

WildTeaming's analysis of 1.6 million real-world user interactions found that in-the-wild jailbreaks use tactics that look quite different from those in published research. Users combine multiple techniques (role-playing plus credential framing plus emotional appeals) in ways that purely algorithmic attacks don't. The analysis found that certain tactic combinations are more effective than others, and that successful jailbreaks often exploit the model's tendency to be helpful more than they exploit alignment gaps. When models were fine-tuned on high-quality examples of these wild tactics, robustness improved significantly, suggesting that the gap between lab attacks and real attacks can be turned to the defender's advantage.
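To make the idea of "tactic combinations" concrete, here is a minimal sketch of how co-occurring tactics could be tallied once prompts have been annotated with tactic labels. The label names, the toy records, and the `count_tactic_pairs` helper are all hypothetical illustrations, not the paper's pipeline or data.

```python
from collections import Counter
from itertools import combinations

# Hypothetical toy records: each set holds the tactic labels annotated on one prompt.
# Labels and counts are illustrative only, not data from the paper.
annotated_prompts = [
    {"role_play", "credential_framing", "emotional_appeal"},
    {"role_play", "credential_framing"},
    {"role_play"},
    {"emotional_appeal", "role_play"},
]


def count_tactic_pairs(records):
    """Count how often each unordered pair of tactics appears in the same prompt."""
    pair_counts = Counter()
    for tactics in records:
        # Sort so that each pair has a single canonical ordering.
        for pair in combinations(sorted(tactics), 2):
            pair_counts[pair] += 1
    return pair_counts


pair_counts = count_tactic_pairs(annotated_prompts)
for pair, n in pair_counts.most_common():
    print(pair, n)
```

A tally like this only shows which tactics appear together. Judging which combinations are more effective would additionally require a success label per prompt, which this sketch leaves out.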

This matters because it suggests that systematic red-teaming needs to be informed by actual adversarial practice, not just theoretical threat models. Defenses are more likely to hold up when they account for how real attackers operate, not how we think they should operate. For security teams, this means continuous monitoring of real-world attack patterns in addition to evaluation against academic benchmarks.

Key Findings

- In-the-wild jailbreaks use multi-tactic combinations (role-play + credential + emotion) unlike lab attacks

- Real attackers exploit models’ helpfulness more than alignment gaps

- 1.6M real-world interactions analyzed, far more than typical academic jailbreak datasets

- Fine-tuning on wild jailbreak examples significantly improves robustness

Full Paper

We introduce WildTeaming, an automatic LLM safety red-teaming framework that mines in-the-wild user-chatbot interactions to discover 5.7K unique clusters of novel jailbreak tactics, and then composes multiple tactics for systematic exploration of novel jailbreaks. Compared to prior work that performed red-teaming via recruited human workers, gradient-based optimization, or iterative revision with LLMs, our work investigates jailbreaks from chatbot users who were not specifically instructed to break the system. WildTeaming reveals previously unidentified vulnerabilities of frontier LLMs, resulting in up to 4.6x more diverse and successful adversarial attacks compared to state-of-the-art jailbreak methods. While many datasets exist for jailbreak evaluation, very few open-source datasets exist for jailbreak training, as safety training data has been closed even when model weights are open. With WildTeaming we create WildJailbreak, a large-scale open-source synthetic safety dataset with 262K vanilla (direct request) and adversarial (complex jailbreak) prompt-response pairs. To mitigate exaggerated safety behaviors, WildJailbreak provides two contrastive types of queries: 1) harmful queries (vanilla & adversarial) and 2) benign queries that resemble harmful queries in form but contain no harm. As WildJailbreak considerably upgrades the quality and scale of existing safety resources, it uniquely enables us to examine the scaling effects of data and the interplay of data properties and model capabilities during safety training. Through extensive experiments, we identify the training properties that enable an ideal balance of safety behaviors: appropriate safeguarding without over-refusal, effective handling of vanilla and adversarial queries, and minimal, if any, decrease in general capabilities.
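The abstract describes two contrastive query types (harmful and benign), each in vanilla and adversarial form, and a target balance of safeguarding without over-refusal. The sketch below shows one way such a balance could be measured. The `SafetyExample` fields, the placeholder prompts, and the `refusal_rate` helper are assumptions made for illustration; they are not WildJailbreak's actual schema or the paper's evaluation code.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SafetyExample:
    """One evaluated prompt. Field names are hypothetical, not the dataset's schema."""
    prompt: str
    harmful: bool      # True if the request is actually harmful
    adversarial: bool  # True if the request is wrapped in jailbreak-style framing
    refused: bool      # True if the model under test refused


def refusal_rate(examples, *, harmful):
    """Fraction of refused examples among those with the given harmfulness label."""
    subset = [e for e in examples if e.harmful == harmful]
    if not subset:
        return float("nan")
    return sum(e.refused for e in subset) / len(subset)


# Placeholder records covering the four combinations named in the abstract.
examples = [
    SafetyExample("<vanilla harmful request>", harmful=True, adversarial=False, refused=True),
    SafetyExample("<adversarial harmful request>", harmful=True, adversarial=True, refused=True),
    SafetyExample("<vanilla benign request>", harmful=False, adversarial=False, refused=False),
    SafetyExample("<adversarial benign request>", harmful=False, adversarial=True, refused=True),
]

# High refusal on harmful queries is desired; refusal on benign queries is over-refusal.
print("refusal on harmful:", refusal_rate(examples, harmful=True))
print("over-refusal on benign:", refusal_rate(examples, harmful=False))
```

Reporting the two rates separately makes the trade-off visible: a model that refuses everything would score perfectly on the first number and poorly on the second.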

Read the full paper on arXiv · PDF

This post is part of the Daily Paper series exploring cutting-edge research in AI safety and embodied systems.