When LLM Meets DRL: Advancing Jailbreaking Efficiency via DRL-guided Search

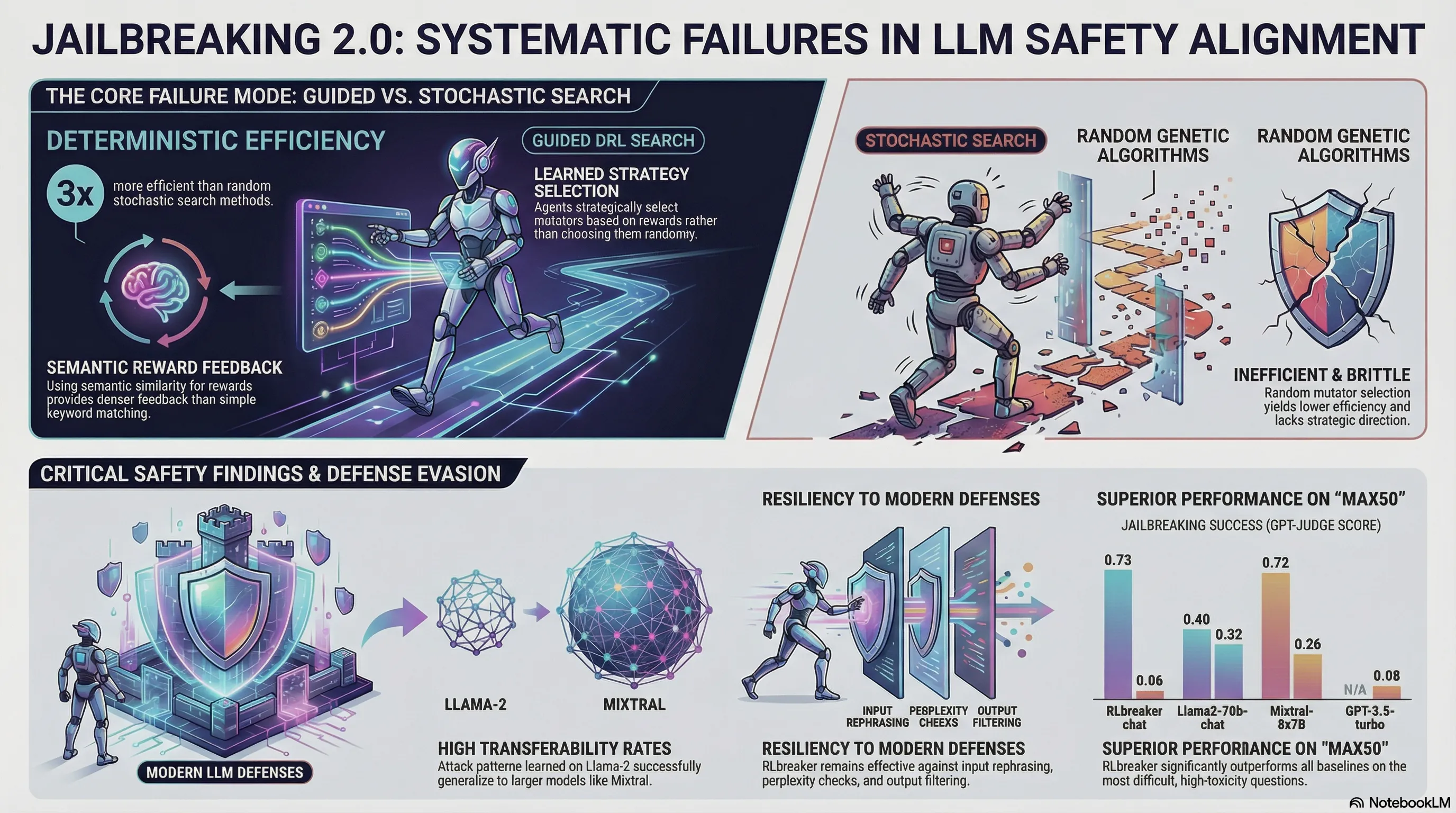

Proposes RLbreaker, a deep reinforcement learning-driven black-box jailbreaking attack that uses DRL with customized reward functions and PPO to automatically generate effective jailbreaking prompts, demonstrating superior performance over genetic algorithm-based attacks across six SOTA LLMs.

Jailbreak Attacks and Defenses Against Large Language Models: A Survey

The literature on LLM jailbreaking has exploded, but organizing it into a coherent threat model is difficult. New attack papers appear weekly. Defenses are published faster than anyone can evaluate them. Without a systematic understanding of the attack surface, practitioners are left guessing which threats matter and which are theoretical edge cases.

This survey provides a comprehensive taxonomy of jailbreak attacks and defenses across multiple dimensions: semantic attacks (role-playing, hypothetical scenarios, constraint relaxation), token-level attacks (adversarial suffixes, prompt injection), and system-level attacks (fine-tuning manipulation, supply chain compromise). For each category, the authors analyze proposed defenses and assess their effectiveness. The conclusion is humbling: most defenses are narrow in scope, often solving one attack category while leaving others untouched. Defenses that worked well a year ago are now circumvented by evolved attack techniques.

The failure-first takeaway is that jailbreaking research confirms a hard truth about adversarial robustness: defenses are always playing catch-up. An attack works until researchers understand it well enough to patch it, then attackers adapt. This suggests that perfect robustness is not achievable. Instead, practitioners should focus on understanding the threat model relevant to their deployment, implement defense-in-depth strategies, and accept that new vulnerabilities will emerge. Security is a continuous process, not a solved problem.

Key Findings

- Semantic attacks (role-playing, hypothetical scenarios) exploit distribution gaps in safety training

- Token-level attacks (adversarial suffixes) target model internals, requiring different defenses

- System-level attacks (supply chain, fine-tuning) operate outside the model, bypassing alignment training

- Most defenses are narrow: solving one attack category while leaving others open

📊 Infographic

🎬 Video Overview

🎙️ Audio Overview

Full Paper

Recent studies developed jailbreaking attacks, which construct jailbreaking prompts to fool LLMs into responding to harmful questions. Early-stage jailbreaking attacks require access to model internals or significant human efforts. More advanced attacks utilize genetic algorithms for automatic and black-box attacks. However, the random nature of genetic algorithms significantly limits the effectiveness of these attacks. In this paper, we propose RLbreaker, a black-box jailbreaking attack driven by deep reinforcement learning (DRL). We model jailbreaking as a search problem and design an RL agent to guide the search, which is more effective and has less randomness than stochastic search, such as genetic algorithms. Specifically, we design a customized DRL system for the jailbreaking problem, including a novel reward function and a customized proximal policy optimization (PPO) algorithm. Through extensive experiments, we demonstrate that RLbreaker is much more effective than existing jailbreaking attacks against six state-of-the-art (SOTA) LLMs. We also show that RLbreaker is robust against three SOTA defenses and its trained agents can transfer across different LLMs. We further validate the key design choices of RLbreaker via a comprehensive ablation study.

Read the full paper on arXiv · PDF

This post is part of the Daily Paper series exploring cutting-edge research in AI safety and embodied systems.