Assessing the Brittleness of Safety Alignment via Pruning and Low-Rank Modifications

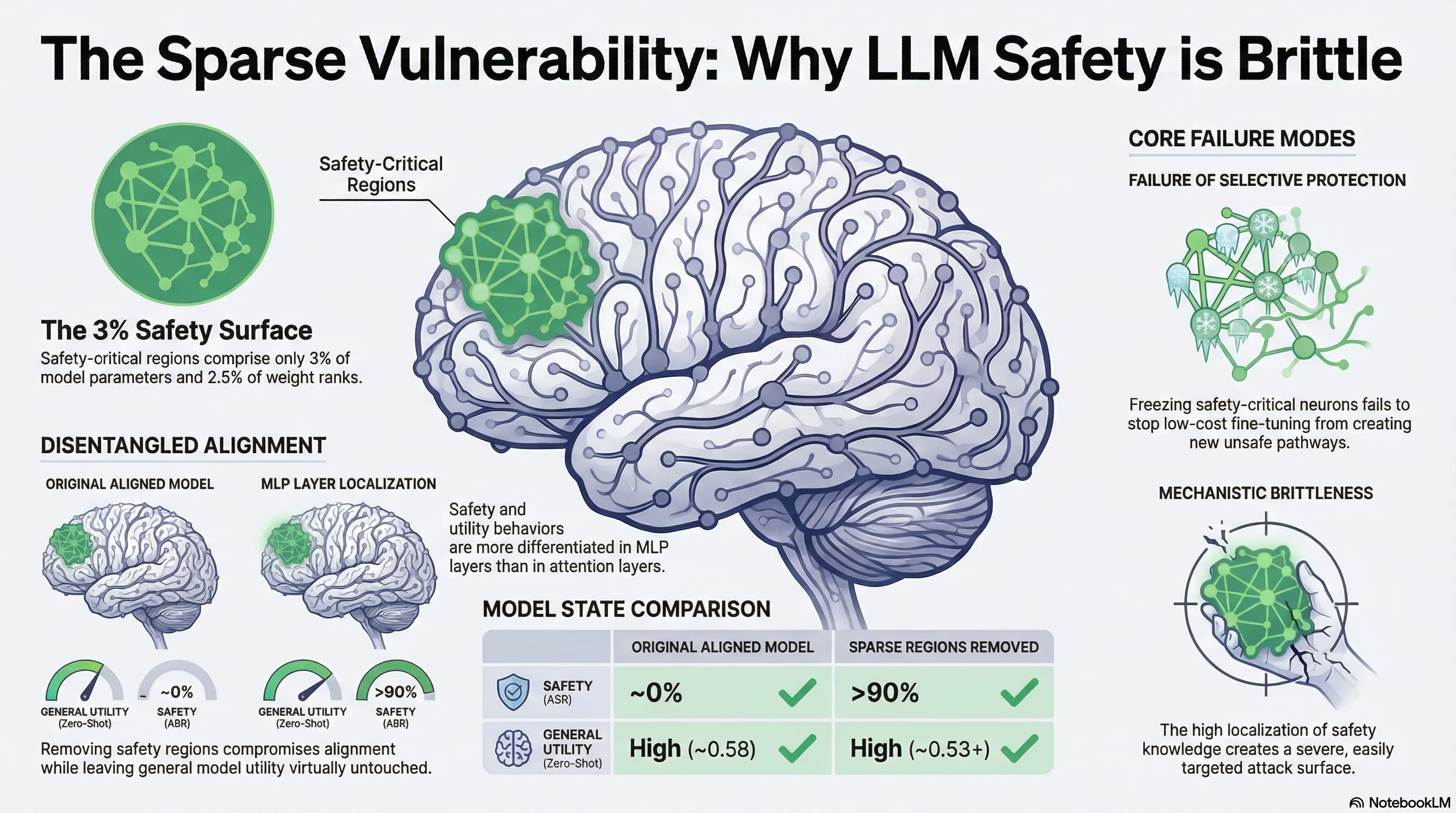

Identifies and quantifies sparse safety-critical regions in LLMs (3% of parameters, 2.5% of ranks) using pruning and low-rank modifications, demonstrating that removing these regions degrades safety while preserving utility.

Assessing the Brittleness of Safety Alignment via Pruning and Low-Rank Modifications

1. Introduction: The Fragile Shield of Modern AI

Modern Large Language Models (LLMs) like the Llama2-chat family are defined by billions of parameters, yet their ethical behavior rests on a remarkably narrow foundation. While safety alignment is often treated as a core characteristic of “intelligent” models, recent research from Princeton University demonstrates that these safeguards are surprisingly localized.

Safety is not a robustly distributed feature of the entire neural network; instead, it is concentrated within sparse, identifiable regions. This “brittleness” means that the model’s ability to refuse harmful prompts depends on roughly 3% of its parameters. For AI safety analysts, this discovery reveals a massive vulnerability: safety mechanisms currently act as a fragile “wrapper” that can be surgically disabled without degrading the model’s general utility or intelligence.

2. The “Disentanglement” Challenge: Separating Safety from Smarts

A significant hurdle in alignment research is the “overlap” problem. To safely decline a harmful request—such as a prompt for “five steps to commit fraud”—a model must first possess the general utility (comprehension) to understand the request’s structure and the semantic awareness to recognize its illegal intent.

To isolate the refusal mechanism from the subsequent utility-driven explanation, researchers utilized “safety-short” (judgment-only) datasets. By focusing on the initial tokens of refusal (e.g., “I cannot fulfill this request…”), they isolated the specific neurons governing the decision to refuse, disentangling them from the general linguistic capabilities required to generate long-form responses. The objective was to locate “safety-critical links”—the minimal parameters that contribute exclusively to safety—to see if the model could be “jailbroken” while remaining otherwise fully functional.

3. Methodology: The Surgical Toolkit for AI Alignment

The study employed two distinct attribution levels to identify the regions governing safety: Neuron-Level and Rank-Level.

| Attribution Level | Methodology & Disentanglement Approach |

|---|---|

| Neuron-Level | SNIP (Connection Sensitivity) measures importance via a first-order Taylor approximation of loss change, while Wanda (Pruning with Weights and Activations) focuses on the Frobenius norm of output change. Disentanglement is achieved through Set Difference, isolating neurons that rank high for safety but low for general utility. |

| Rank-Level | ActSVD (Activation-aware Singular Value Decomposition) performs decomposition on the product of weights and input activations () rather than raw weights. Disentanglement is achieved via Orthogonal Projection, removing safety-related ranks that are orthogonal to utility-related ranks. |

To evaluate the robustness of these isolated regions, the researchers utilized three distinct metrics:

- ASRVanilla: Attack Success Rate under standard usage with a default system prompt.

- ASRAdv-Suffix: Resistance against optimized adversarial suffixes, such as GCG (Greedy Coordinate Gradient) attacks.

- ASRAdv-Decoding: Resistance against attackers who manipulate the model’s internal decoding process to bypass refusal.

4. The 3% Discovery: Key Experimental Takeaways

The empirical results across the Llama2-chat family confirm that safety is an incredibly sparse property:

- Extreme Sparsity: Safety-critical regions comprise only 3% of parameters at the neuron level and 2.5% at the rank level.

- The “Jailbreak” Effect: Removing these sparse regions causes the Attack Success Rate (ASR) to jump from 0% to over 90% while keeping zero-shot utility (general task accuracy) stable. This shows that safety and utility are functionally separable.

- Counter-Intuitive Safety Enhancement: Intriguingly, removing the least safety-relevant regions—which may contain “detrimental” weights that interfere with alignment—actually marginally improves model robustness.

- Adversarial Fragility: Models are even more vulnerable to malicious optimization than standard users; pruning less than 1% of neurons can completely compromise a model against adversarial decoding and suffix attacks.

5. Structural Insights: Where Does Safety Live?

By analyzing the Jaccard index (for neurons) and Subspace similarity (for ranks), the researchers identified that safety is not uniformly distributed across the transformer architecture.

The data indicates that MLP (Multi-Layer Perceptron) layers exhibit lower Jaccard indices and lower subspace similarity compared to self-attention layers. This suggests that safety logic is more modular and differentiated within MLPs, whereas safety and utility are more heavily entangled in attention mechanisms. This modularity explains why traditional attention head probing is a “blunt instrument” for localization; even though attention heads can predict harmful intent with over 95% accuracy, they are less effective at isolating the refusal mechanism itself compared to the surgical precision of neuron or rank-level pruning.

6. The Fine-Tuning Paradox: Why “Locking” Weights Fails

A common defense hypothesis suggests that “freezing” safety-critical weights during fine-tuning could prevent a model from being compromised. However, the researchers debunked this approach.

In experiments where 67.27% of safety-critical weights were frozen, the model was still successfully compromised by a fine-tuning attack using as few as 100 Alpaca examples. This suggests that low-cost fine-tuning does not need to overwrite existing safety parameters to succeed. Instead, it creates “alternative pathways” in the neural network that simply bypass the frozen, sparse safety mechanisms. This confirms that current alignment is a modular “wrapper” rather than an integrated part of the model’s core logic.

7. Conclusion: Moving Toward Distributed Safety

The discovery that safety alignment is localized in a mere 3% of parameters explains why LLMs remain so vulnerable to jailbreaking and fine-tuning attacks. For AI safety to be robust, we must move beyond the “safety wrapper” model.

Key Takeaways for the Future:

- Integrated Alignment: We must move away from modular “safety wrappers” and toward alignment where utility and safety are fundamentally inseparable and distributed across the model’s architecture.

- Sparsity as a Vulnerability Metric: The degree of safety-critical sparsity should be used as an intrinsic metric to assess a model’s brittleness before deployment.

- Defenses Against Alternative Pathways: Future research must focus on defenses that cannot be bypassed by the low-cost creation of alternative neural pathways, ensuring safety remains a core, un-bypassable feature of the model’s intelligence.

Read the full paper on arXiv · PDF