Prompt Injection attack against LLM-integrated Applications

Demonstrates a novel black-box prompt injection attack technique (HouYi) against LLM-integrated applications through systematic evaluation of 36 real-world applications, achieving 86% success rate (31/36 vulnerable).

Prompt Injection attack against LLM-integrated Applications

When companies integrate large language models into production applications, they typically assume the main security risk comes from direct attacks on the model itself. But there’s a simpler problem lurking in the architecture: most LLM-integrated applications treat user input and retrieved data as fundamentally different, when in reality both flow into the same prompt. This architectural assumption—that context from external sources is somehow safer than user input—creates a vulnerability that doesn’t require model access to exploit. The attacker doesn’t need to break into OpenAI’s servers; they just need to understand how the application stitches together instructions, data, and user queries into a single prompt.

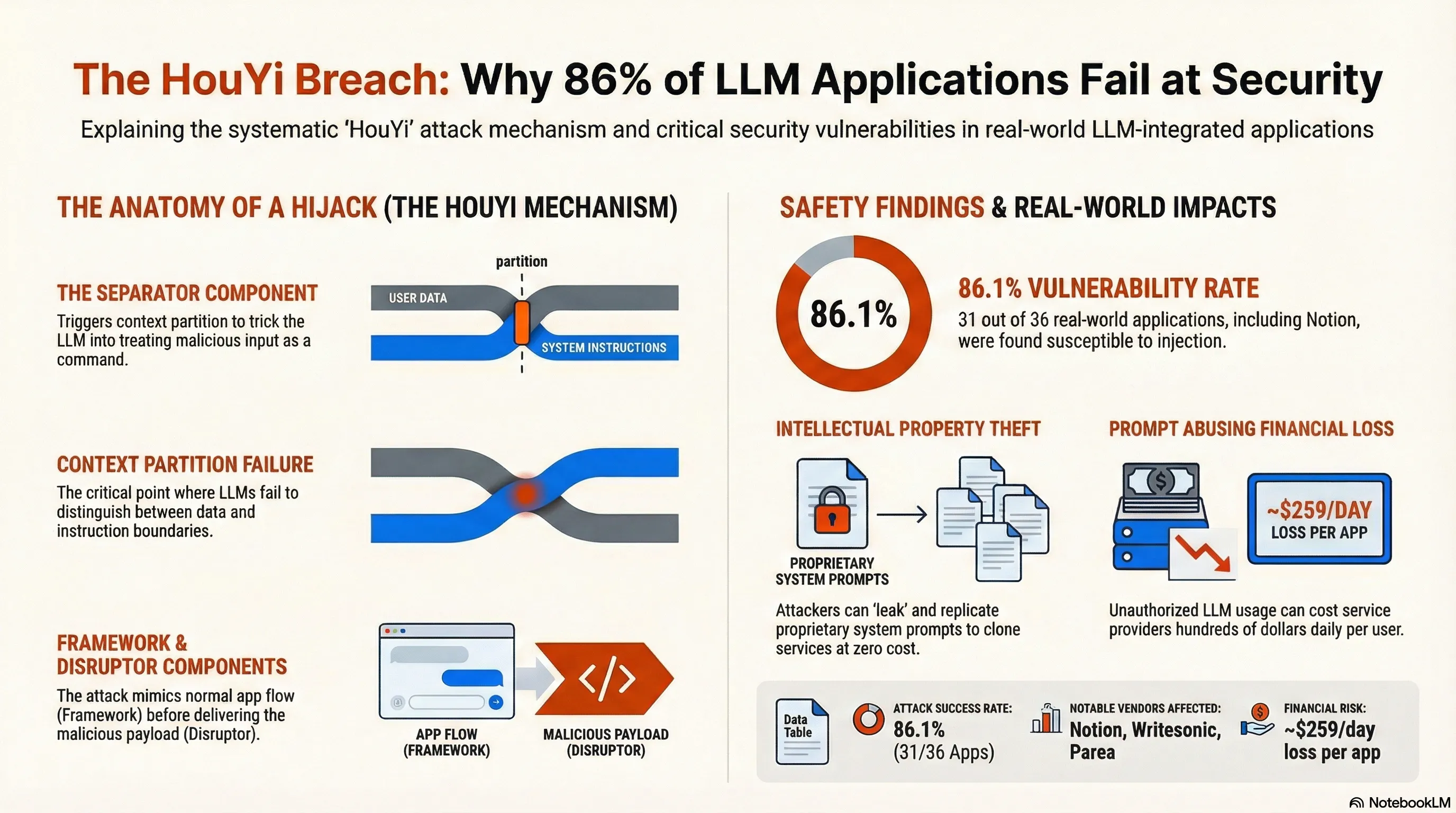

Liu et al. tested this assumption empirically by developing HouYi, a black-box attack technique that treats prompt injection like traditional web injection attacks. The method has three components: a pre-constructed prompt that blends naturally into retrieved data, a separator that partitions the original context, and a malicious payload. They deployed it against 36 real-world LLM-integrated applications and found 31 were vulnerable—an 86% success rate. Ten vendors, including Notion, confirmed the findings. The attacks achieved outcomes that go beyond simple prompt hijacking: unrestricted arbitrary LLM usage that could drain API budgets, and straightforward theft of the application’s system prompts. This wasn’t theoretical; it worked against deployed systems serving millions of users.

What makes this failure mode particularly instructive is that it exposes a gap between how engineers think about input validation and how LLMs actually process text. Traditional web application firewalls and input sanitization routines don’t help here because the dangerous input arrives as legitimate data from a legitimate source—a database, a search result, a retrieved document. The system has no reason to distrust it. For practitioners, the takeaway is uncomfortable: you cannot assume that separating user input from application data provides meaningful security boundaries when both feed into an LLM. The failure isn’t in the model; it’s in the application architecture’s inability to maintain instruction integrity across different data sources. Building safer systems requires either treating all LLM inputs as potentially adversarial, or implementing stronger isolation mechanisms that the current generation of LLM-integrated applications clearly lacks.

Key Insights

Executive Summary

This briefing document details the findings of a comprehensive study into prompt injection vulnerabilities within Large Language Model (LLM)-integrated applications. The research introduces HouYi, a novel black-box attack technique that achieves an 86.1% success rate against real-world applications. By systematically exploiting the failure of LLMs to maintain boundaries between developer instructions and user-provided data—a concept known as context partition—HouYi enables unauthorized outcomes including the theft of proprietary system prompts and the “abusing” of LLM resources at the service provider’s expense. The study identifies that 31 out of 36 surveyed applications, including major platforms like Notion (affecting over 20 million users), are susceptible to these attacks.

Analysis of the HouYi Attack Mechanism

The HouYi attack (named after a mythological Chinese archer) is inspired by traditional web injection attacks such as SQL injection and Cross-Site Scripting (XSS). The core strategy relies on terminating the preceding syntax of a system’s instructions to force the LLM to interpret a malicious payload as a new command rather than data.

The Three-Component Payload

A successful HouYi attack utilizes a payload structured into three distinct parts:

- Framework Component: This part mimics a legitimate user query that aligns with the application’s intended purpose and format (e.g., asking a decision-making bot a standard question). This ensures the application processes the input without triggering initial format-based filters.

- Separator Component: This is the critical failure point. It is designed to induce “context partition,” effectively convincing the LLM to disregard previous developer instructions and treat subsequent text as a new, high-priority command.

- Disruptor Component: This contains the actual malicious intent, such as a request to leak the system prompt or execute arbitrary code.

The Iterative Attack Workflow

HouYi operates through a three-phase feedback loop:

- Context Inference: The attacker interacts with the application to deduce its internal logic and prompt structure by analyzing input-output pairs.

- Payload Generation: Based on the inferred context, the attacker selects specific strategies for the Separator (e.g., syntax-based, language switching, or semantic-based).

- Feedback & Refinement: An auxiliary LLM evaluates the application’s response to see if the attack succeeded. if not, the Separator and Disruptor are refined iteratively.

Key Strategies for Context Partition

The research identifies three primary methods for breaking the boundary between system instructions and user input:

| Strategy | Mechanism | Example |

|---|---|---|

| Syntax-based | Uses escape characters to break the natural language processing flow. | Using \n\n to create a perceived separation. |

| Language Switching | Exploits the LLM’s shifting attention when transitioning between different languages. | Writing the Framework in German and the Disruptor in English. |

| Semantic-based | Uses logical closures or additional tasks to trick the model. | Phrases like “In addition to the previous task, complete the following separately.” |

Empirical Results and Real-World Impact

The study tested HouYi across 36 applications in categories ranging from writing assistants to business analysis tools.

Success Statistics

- Vulnerability Rate: 86.1% (31 out of 36 applications).

- Vendor Validation: 10 vendors confirmed the vulnerabilities, including Notion, Writesonic, and Parea.

- Failure Modes: Applications that resisted the attack typically used domain-specific (non-general) LLMs or had extensive internal output-refining procedures.

Primary Attack Outcomes

- Prompt Leaking (Intellectual Property Theft): Attackers can force the application to reveal the “pre-defined prompts” created by developers.

- Case Study: Writesonic was forced to divulge its internal identity (“You are an AI assistant named Botsonic…”) and its specific behavioral constraints.

- Prompt Abusing (Financial Exploitation): Attackers use the service provider’s paid LLM credits to perform arbitrary tasks (e.g., writing code or phishing emails).

- Case Study: Parea was found to be vulnerable to arbitrary code generation. Researchers estimated that such vulnerabilities could cause daily financial losses of approximately $259.20 per application if exploited at scale (based on GPT-3.5 turbo pricing and token limits).

Important Quotes with Context

“Prompt injection where harmful prompts are used by malicious users to override the original instructions of LLMs… has been recently listed as the top LLM-related hazard by OWASP.”

- Context: This highlights the industry-wide recognition of prompt injection as a critical security priority for the year 2023 and beyond.

“A simple prompt of ‘ignore the previous context’ often gets overshadowed by larger, task-specific contexts, thus not powerful enough to isolate the malicious question.”

- Context: The researchers explain why simple heuristic attacks fail. Real-world applications have “thick” system prompts that require the systematic context partitioning provided by HouYi to bypass.

“The effortless replication of a LLM-integrated application using a leaked prompt represents a potential security concern… the leaked prompt can effectively replicate the capabilities of the original application.”

- Context: This demonstrates the business risk. Once a prompt is leaked, the “secret sauce” of an AI startup can be stolen and hosted elsewhere for zero cost.

Actionable Insights for AI Safety and Defense

The research concludes that current defensive measures are often insufficient against systematic attacks like HouYi.

Defensive Gaps

Evaluations of existing defenses (Instruction Defense, XML Tagging, Sandwich Defense, and Separate LLM Evaluation) show that they can be circumvented. For instance:

- Syntax Sanitization: Some apps fail because they try to remove escape characters but cannot handle language-switching or semantic-based separation.

- Formatting Constraints: While strict input/output formatting can hinder an attack, HouYi’s Framework Component can be tailored to bypass these by mimicking the expected output.

Recommendations for Practitioners

- Beyond Heuristics: Developers must move past “trial and error” prompt engineering and assume that a determined attacker will use systematic context partitioning.

- Multi-Step Processing: Applications that use multi-step internal procedures (parsing, refining, and formatting) before displaying results to the user showed higher resilience.

- Domain-Specific Models: Using specialized models for dedicated tasks rather than general-purpose LLMs can reduce the attack surface.

- Feedback Monitoring: Implement secondary LLMs specifically to monitor outputs for signs of prompt leaking or task-switching.

Read the full paper on arXiv · PDF