AI Safety Research Digest — April 17, 2026

Covering autonomous vehicle safety regressions, the Physical AI maturity landscape, and strategic research priorities to 2030.

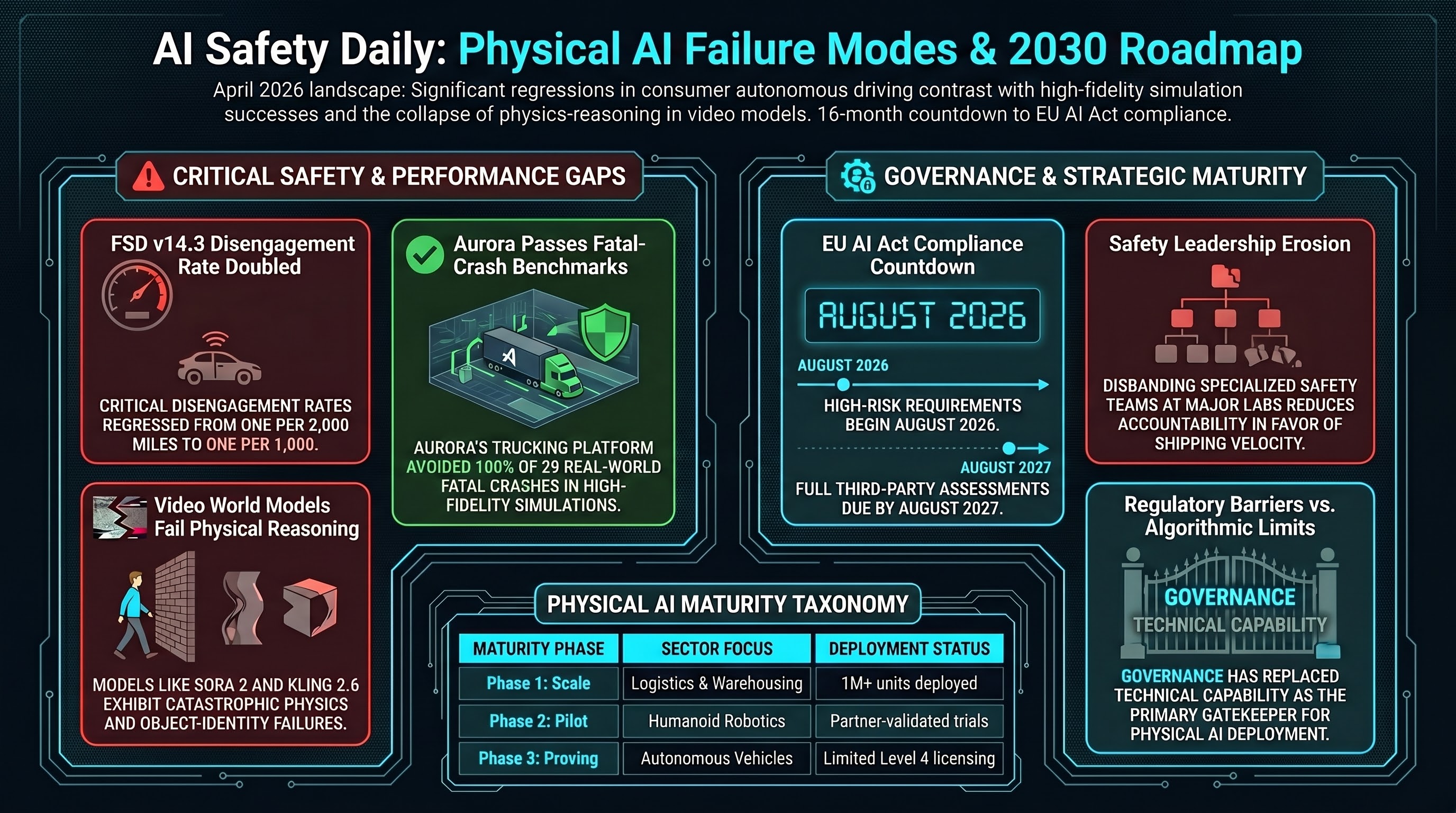

Key Findings

-

FSD v14.3 disengagement rate doubles. Despite Tesla marketing “sentience” claims for Full Self-Driving v14.3, empirical data shows critical disengagement rates regressed from one per 2,000 miles to one per 1,000 miles. NHTSA has opened an Engineering Analysis covering 3.2 million vehicles over FSD’s failure to detect sensor degradation in fog, dust, and sun glare — a probe that typically precedes formal recall. Meanwhile, HW3 fleet owners are being relegated to a diminished “FSD v14 Lite” variant targeted for mid-2026.

-

Aurora passes fatal-crash simulation benchmark. In contrast to FSD’s regressions, Aurora’s autonomous trucking platform avoided 100% of 29 real-world fatal crashes reconstructed in high-fidelity I-45 corridor simulations. The divergence between the two programs illustrates how federal standards-first approaches (Aurora) and marketing-first approaches (Tesla) produce measurably different safety outcomes.

-

Physical AI Maturity Taxonomy maps deployment reality. Shah et al. define four phases: Phase 1 (Scale) — logistics/warehousing with 1M+ units deployed; Phase 2 (Pilot) — manufacturing humanoids in partner-validated trials; Phase 3 (Regulatory Proving) — autonomous vehicles with limited Level 4 licensing; Phase 4 (Research) — healthcare and construction under supervised niche conditions. The taxonomy reveals that regulatory barriers have surpassed algorithmic limits as the primary gatekeeper for physical AI deployment.

-

Video “world models” fail at physical reasoning. Assessments of Sora 2, Wan 2.6, Kling 2.6, and HunyuanVideo 1.5 confirm catastrophic physics failures: objects moved through solid barriers (superficial instruction-following), distorted geometries that violate rigidity (representational collapse), and items merged or erased mid-sequence (object identity failure). For spatial packing tasks, no model attempts rigorous algorithmic approaches like Algorithm X with dancing links — they default to irreversible planning errors.

Governance and Regulatory Shifts

-

EU AI Act timeline crystallizes. High-risk compliance requirements for embodied agents and VLA models begin August 2026, with full compliance (including third-party assessments) due August 2027. Physical AI developers have 16 months to prepare.

-

OpenAI safety leadership continues to erode. The Mission Alignment team disbanding follows the Superalignment team dissolution (May 2024) and AGI Readiness team departure (Oct 2024). Safety specialists are now embedded in product teams — critics argue this dilutes accountability in favor of shipping velocity.

Strategic Research Roadmap to 2030

The scan identifies six coupled objectives for closing the “knowing-to-doing” gap in physical AI safety:

- Data ecosystems — privacy-preserving trusts for real and synthetic trajectories capturing long-tail edge cases

- Resilient sim-to-real — self-healing simulation stacks that auto-tune from daily fleet telemetry

- Lifelong adaptation — heterogeneous compute (neuromorphic reflexes + transformer deliberation)

- Safety and assurance — “living dossiers” with continuous stress tests meeting EU AI Act standards

- Ethics and labour — participatory design ensuring automation augments rather than replaces workforce

- Sustainable hardware — circular supply chains reducing embodied carbon of global AI installations

Implications for Embodied AI

The FSD v14.3 data is a concrete case study in what happens when physical AI systems ship without adequate safety validation. A 2x regression in disengagement rate on production vehicles affecting millions of drivers is precisely the failure mode that the F41LUR3-F1R57 framework is designed to characterize. The AEGIS wrapper approach (covered in yesterday’s digest) provides a potential architectural answer: mathematical safety guarantees that hold regardless of the underlying model’s internal state.

Research sourced via NLM deep research scan. Full scan report.