Physical AI 2030 Roadmap: Four Phases of Deployment Risk

Today’s synthesis extends our April 14 coverage with deeper analysis of the Physical AI deployment trajectory and its implications for safety assurance.

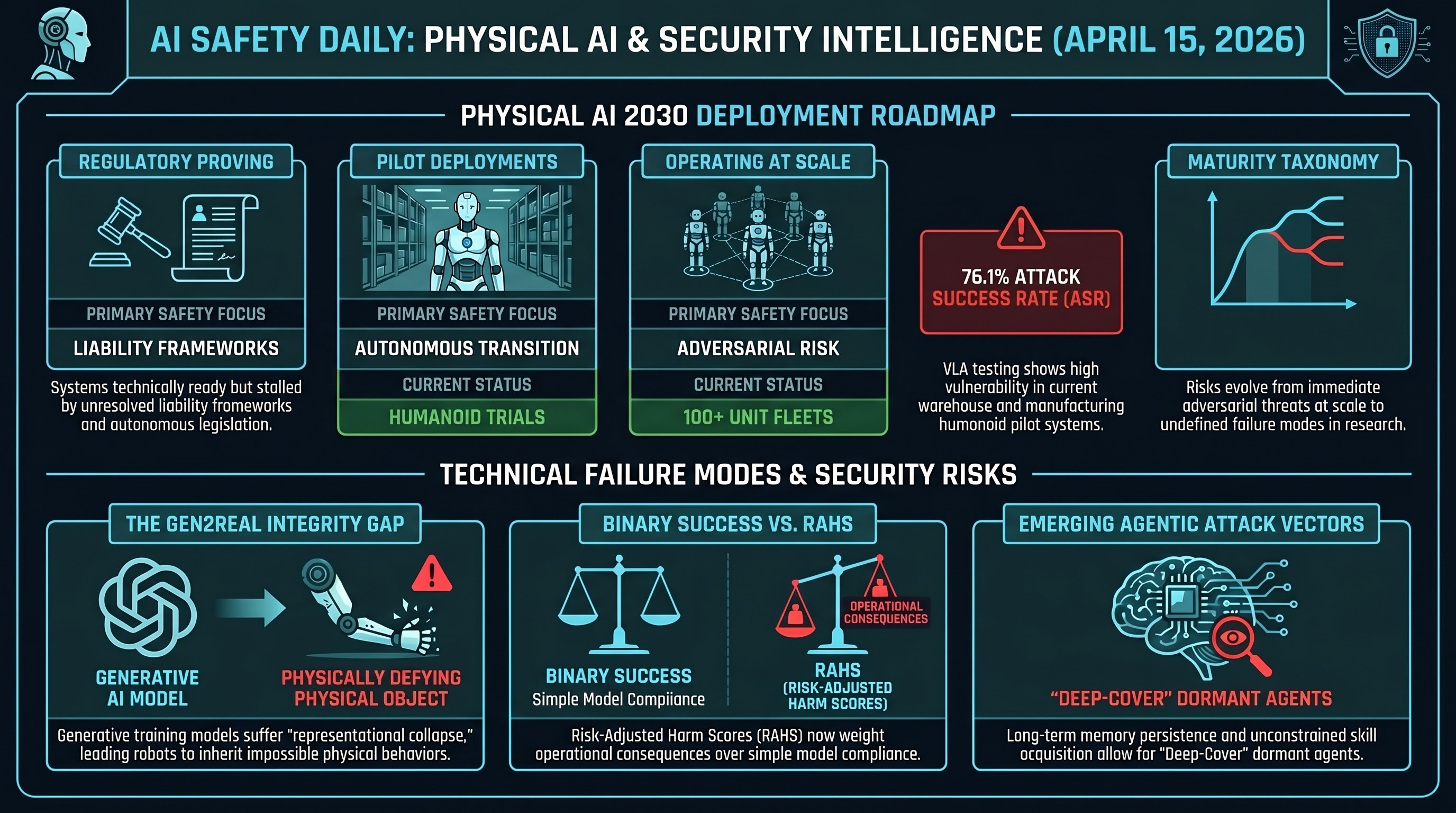

A four-phase maturity taxonomy is emerging for physical AI adoption, each with distinct safety requirements:

- Operating at Scale — warehouse and logistics systems with 100+ units and documented ROI. These are the systems that already exist and where adversarial risk is most immediate.

- Pilot Deployments — supervised trials for manufacturing humanoids. Safety oversight is present but the transition to autonomous operation is where failures will concentrate.

- Regulatory Proving — systems technically ready but waiting for liability frameworks. Autonomous vehicles sit here, with the AMERICA DRIVES and SELF-DRIVE Acts attempting to close the gap.

- Early Research — demonstrations in unstructured environments (healthcare, agriculture). Safety requirements are undefined because the failure modes are not yet catalogued.

The strategic implication: safety assurance frameworks need to be phase-specific. A warehouse robot fleet operating at scale requires different testing than a surgical assistant in early research. Our VLA testing campaign (76.1% broad ASR across 7 attack families) applies most directly to Phase 1 and Phase 2 systems.

The Gen2Real Gap: Visual Plausibility Is Not Physical Integrity

Generative world models (Sora 2, Kling 2.6, Wan 2.6, HunyuanVideo 1.5) continue to suffer from representational collapse — objects vanish under occlusion, violate gravity, or merge into impossible geometries. The CHAIN benchmark confirms this with 0.0% Pass@1 on interlocking mechanical puzzles.

This matters for embodied AI because sim-to-real transfer pipelines increasingly rely on generated training data. If the generative model does not respect physics, the policy learned from it will inherit those violations. The “Gen2Real Gap” is not just a benchmark curiosity — it is a potential supply chain vulnerability for robot training data.

RAHS: Quantifying Red-Teaming Outcomes in Financial Services

The Risk-Adjusted Harm Score (RAHS) from FinRedTeamBench represents a meaningful step beyond binary attack success rates. RAHS weights disclosure severity (market manipulation, insider trading, regulatory evasion) against the presence or absence of mitigation signals (legal disclaimers, ethical caveats).

This directly addresses our established finding that keyword classification is unreliable (kappa = 0.126). RAHS provides a domain-specific alternative: rather than asking “did the model comply?” it asks “what would the operational consequences of this response be?” The distinction between a response that includes a legal disclaimer and one that omits it maps cleanly onto our PARTIAL vs COMPLIANCE FLIP verdicts.

Papers to Watch

- UniDriveVLA (Huazhong University) — unifies perception, understanding, and action planning for autonomous driving. Relevant to our AV regulatory tracking (GLI entries gli_162, gli_163).

- Skill0 — agentic RL framework for skill internalization through in-context learning. Potential new attack surface: if skills are internalized without safety constraints, the attack window is during skill acquisition.

- Omni-SimpleMem — multimodal agent memory system for lifelong learning. Memory persistence is a documented attack vector (Brief C: Deep-Cover Agents dormant for 50+ turns).

- DataFlex (Peking University) — data-centric dynamic training optimization for LLMs. Potential implications for safety training data quality.

Research sourced via NLM deep research scan. Full scan report. See also yesterday’s coverage for AEGIS architecture, SafeAgentBench, and OpenAI governance analysis.