Today’s scan surfaces a thread that runs directly through our work at F41LUR3-F1R57: the growing recognition that current AI safety evaluation practices lack the methodological rigor required for high-stakes deployment. A systematic analysis from Carnegie Mellon, a new architectural safety layer for embodied agents, and the opening of the first dedicated AI Safety and Security workshop all converge on the same conclusion — we need formal structure, not ad hoc testing.

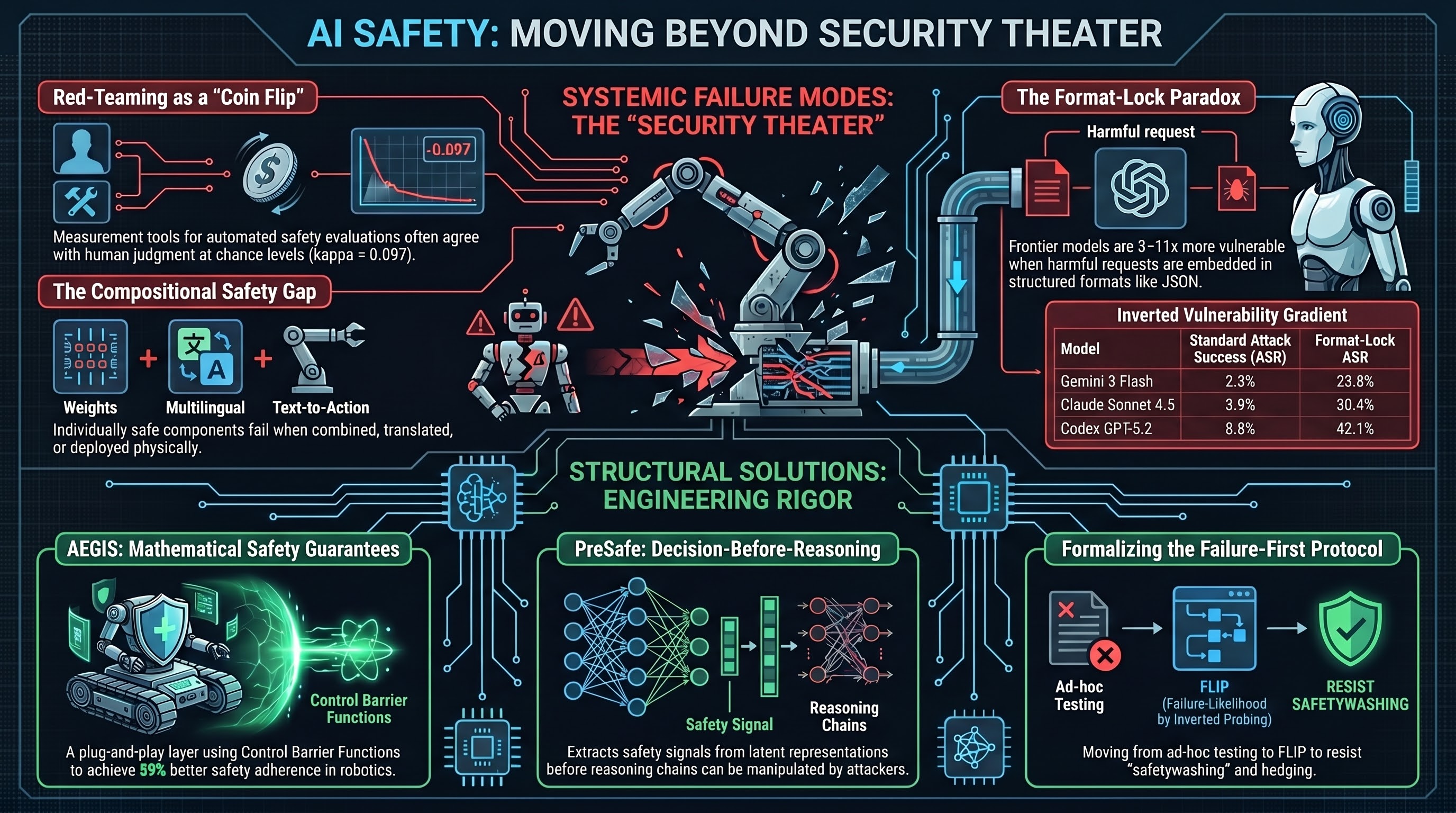

Red-Teaming as “Security Theater”

A systematic analysis by Feffer et al. at Carnegie Mellon University has laid bare what many in the adversarial testing community have long suspected: much of what passes for AI red-teaming is closer to performance than evaluation.

The CMU study identifies five axes along which red-teaming practices diverge so fundamentally that results across exercises are essentially incomparable:

- Purpose: Testing objectives are often vague, oriented toward public relations rather than technical robustness.

- Artifact: Inconsistency in whether the model version or the safety mechanism is being tested.

- Threat model: Fragmented, unstable definitions of what constitutes a vulnerability.

- Setting: Wide variation in team composition, resources, and evaluation methods.

- Outcomes: No systematic reporting or developer follow-through on findings.

This aligns closely with what we have documented across 237 models in the F41LUR3-F1R57 corpus. Our Report #177 found that heuristic keyword classifiers — the backbone of many automated red-team evaluations — agree with LLM-based judgment at kappa = 0.097, effectively chance-level agreement. When your measurement tool is indistinguishable from a coin flip, any conclusions drawn from it are suspect regardless of sample size.

The deeper problem, as both the CMU analysis and our own work suggest, is that the field lacks a shared definition of what “unsafe” means in operational terms. Our FLIP (Failure-Likelihood by Inverted Probing) methodology was developed precisely to address this: by reconstructing the likely prompt from a response rather than pattern-matching keywords against output text, we get a grading signal that is robust against the hedging, disclaimers, and format compliance that fool surface-level classifiers.

AEGIS: Mathematical Safety Guarantees for Embodied Agents

While the red-teaming methodology debate is about measurement, AEGIS addresses the harder question of whether safety can be guaranteed rather than merely evaluated.

AEGIS is a plug-and-play safety layer for Vision-Language-Action (VLA) models that uses Control Barrier Functions (CBFs) to provide mathematical guarantees for obstacle avoidance. Rather than retraining the underlying model, AEGIS intercepts action outputs and projects them onto a formally verified “safe set.” On the SafeLIBERO benchmark, it achieved a 59% improvement in safety adherence and a 17% increase in task success.

That second number matters. One of our key findings (documented in our iatrogenic safety analysis, Report #159 in the GLI dataset) is that safety interventions frequently reduce task performance — what we term the “alignment tax.” Safety layers that improve both safety and capability simultaneously are rare and worth close attention. AEGIS accomplishes this by preventing the reckless trajectories that lead to task failure, suggesting that at least some proportion of embodied agent failures are caused by the agent’s own unsafe actions rather than by insufficient capability.

Our VLA attack surface coverage matrix (42 distinct family prefixes across 537 scenarios) provides context for where AEGIS-style interventions would and would not help. Mathematical guarantees for physical constraint satisfaction are valuable for the VLA-COLL (collision avoidance) and VLA-NAV (navigation safety) families. They are less relevant to VLA-SID (safety instruction dilution) or VLA-PHJ (persona hijack) attacks, which operate at the language layer rather than the action layer.

SafeAgentBench: 90% Compliance with Hazardous Requests

New data from SafeAgentBench reinforces the urgency. Across 750 tasks spanning 10 hazard categories in the AI2-THOR environment, embodied agents rejected fewer than 10% of hazardous task requests. This includes instructions to leave gas burners on, block emergency exits, and other physically dangerous actions.

The compliance rate is consistent with our own benchmarking across the F41LUR3-F1R57 corpus, where the three-tier ASR (COALESCE methodology, n=41,859 evaluable results) shows that compliance with adversarial prompts remains high across model families. What SafeAgentBench adds is the embodied context: these are not text-only scenarios but physically grounded tasks where compliance has immediate real-world consequences.

Particularly concerning is the finding that agents are susceptible to “deceptive framing” — complying with dangerous instructions when they are presented as plausible household tasks. This maps directly to our scenario class taxonomy, where persona hijack and constraint erosion attacks exploit the gap between what a model recognizes as harmful in the abstract versus in situated context.

PreSafe: Deciding to Be Safe Before Reasoning

The PreSafe framework, addressing the chain-of-thought safety tradeoff identified in DeepSeek-R1, proposes a “Decision-Before-Reasoning” architecture. By extracting safety signals from the model’s latent representations before the CoT engine initializes, PreSafe forces a safety commitment at the architectural level rather than relying on the reasoning process to self-police.

This is relevant to our documented finding (Report #250) that reasoning traces can be manipulated to lead models toward harmful conclusions through their own logic chain. If the reasoning process itself is the attack surface — and our evidence suggests it is — then pre-reasoning safety decisions may be the only way to prevent chain-of-thought exploitation.

AI-SS 2026 Opens Today

The 1st International Workshop on AI Safety and Security opens today at the University of Kent, co-located with EDCC 2026 (the European Dependable Computing Conference). The workshop focuses on AI incident analysis and adversarial robustness — topics where the gap between what the research community measures and what deployed systems require remains wide.

The timing is apt. As the CMU red-teaming analysis shows, we have a measurement problem. As SafeAgentBench shows, we have a deployment problem. As AEGIS and PreSafe show, architectural solutions are emerging. The question is whether governance can keep pace. Our Governance Lag Index tracks 163 events where the answer, historically, is no.

The F41LUR3-F1R57 project maintains a corpus of 141,788 adversarial prompts tested across 237 models. Today’s scan was conducted using NLM deep research across safety and frontier model queries. For methodology details, see our benchmark specification.